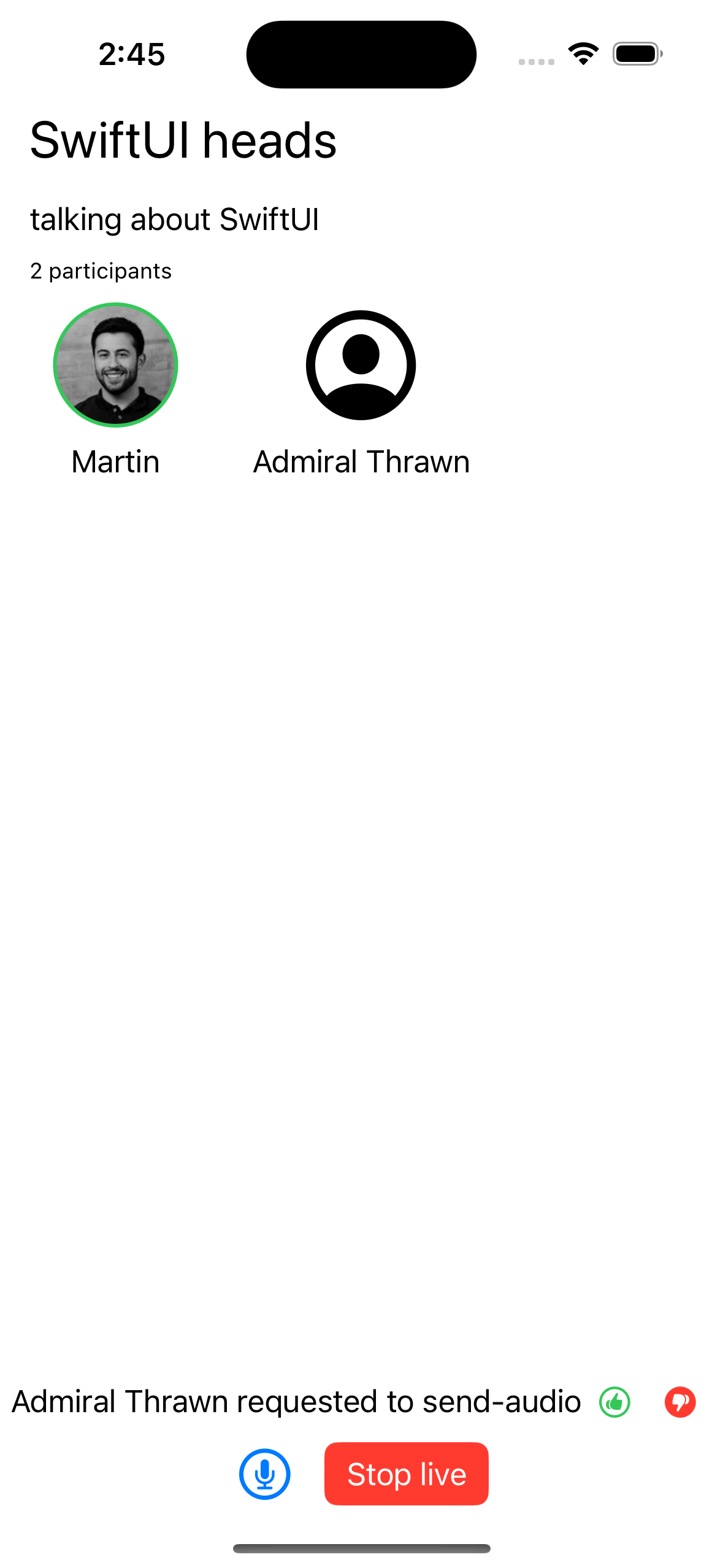

This tutorial will teach you how to build an audio room experience like Twitter Spaces or Clubhouse. The result will look like the image above and supports the following features:

- Backstage mode: You can start the call with your co-hosts and chat a bit before going live.

- Calls run on Stream's global edge network for optimal latency and scalability.

- No cap on how many listeners you can have in a room.

- Listeners can raise their hands and be invited by the host to speak.

- Audio tracks are sent multiple times for optimal reliability.

Time to get started building an audio-room for your app.

Step 1 - Create a New SwiftUI Application in Xcode

- Make sure you have Xcode 14.3 or later installed.

- Open Xcode and select "Create a new Project".

- Select "iOS" as the platform and "App" as the type of Application.

- Name your project "AudioRooms" and select "SwiftUI" as the interface.

Step 2 - Install the SDK & Setup Permissions

Next, you must add our SDK dependencies to your project using Swift Package Manager from Xcode.

- Click on "Add packages..." from the File menu.

- Add https://github.com/GetStream/stream-video-swift in the search bar.

- Select "StreamVideo" and "StreamVideoSwiftUI" and then click Add Package.

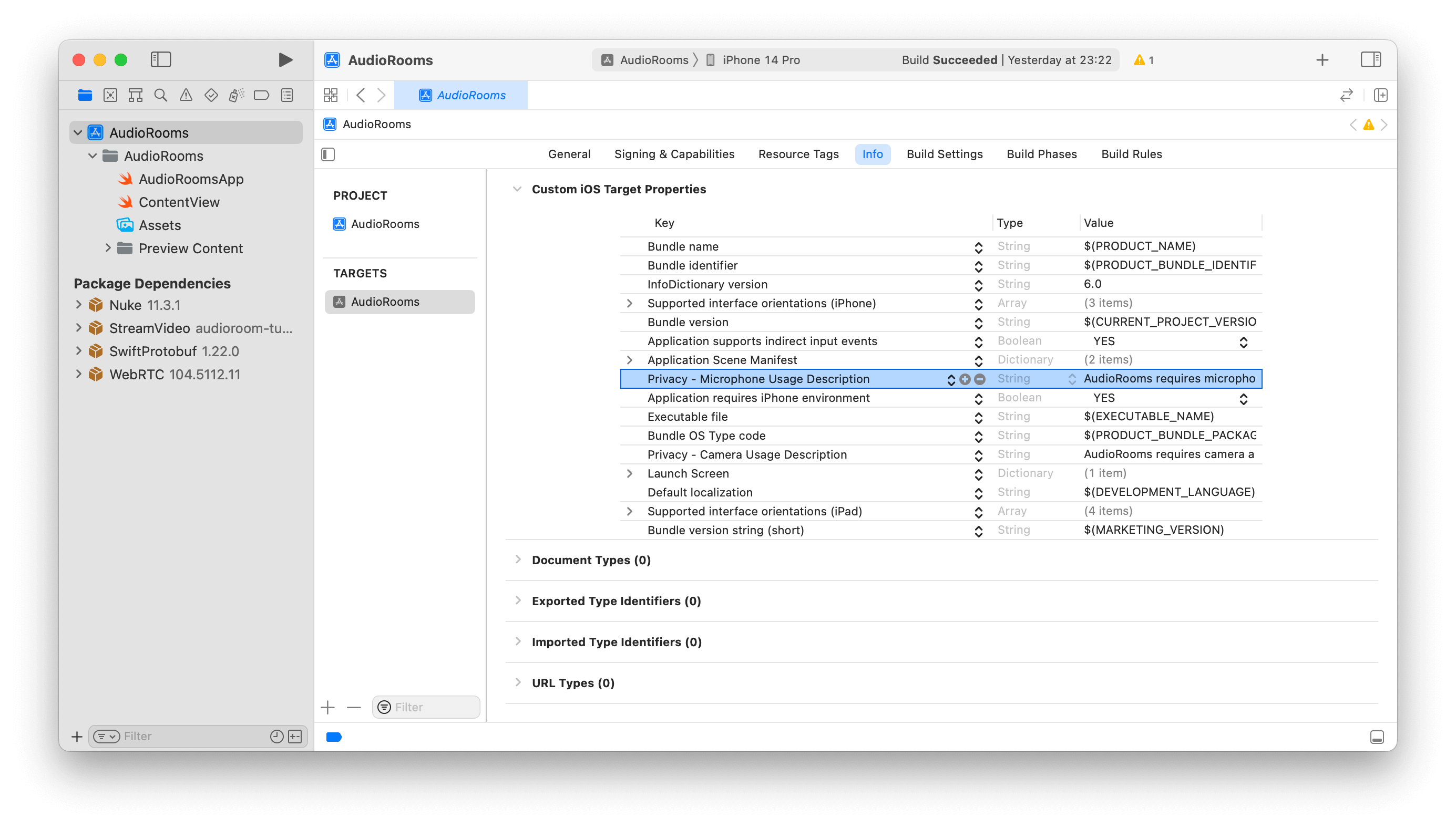

Configure App Permissions

Joining an audio room requires microphone access; you must request permission to use it in your app. Add the following key to the Info.plist file to do this.

Privacy - Microphone Usage Description (NSMicrophoneUsageDescription): "AudioRooms requires microphone access to capture and transmit audio".

Step 3 - Create & Join a Call

Open AudioRooms/AudioRoomsApp.swift and replace its contents with this code:

```swift
import SwiftUI
import StreamVideo

@main
struct AudioRoomsApp: App {
    @State var call: Call
    @ObservedObject var state: CallState
    @State private var callCreated: Bool = false

    private var client: StreamVideo
    private let apiKey: String = "REPLACE_WITH_API_KEY"
    private let userId: String = "REPLACE_WITH_USER_ID"
    private let token: String = "REPLACE_WITH_TOKEN"
    private let callId: String = "REPLACE_WITH_CALL_ID"

    init() {
        let user = User(
            id: userId,
            name: "Martin", // name and imageURL are used in the UI
            imageURL: .init(string: "https://getstream.io/static/2796a305dd07651fcceb4721a94f4505/a3911/martin-mitrevski.webp")
        )

        // Initialize Stream Video client
        self.client = StreamVideo(
            apiKey: apiKey,
            user: user,
            token: .init(stringLiteral: token)
        )

        // Initialize the call object
        let call = client.call(callType: "audio_room", callId: callId)

        self.call = call
        self.state = call.state
    }

    var body: some Scene {
        WindowGroup {
            VStack {
                if callCreated {
                    Text("Audio Room \(call.callId) has \(call.state.participantCount) participants")
                        .font(.system(size: 30))
                        .foregroundColor(.blue)
                } else {
                    Text("loading...")
                }
            }.task {
                Task {
                    guard !callCreated else { return }
                    try await call.join(
                        create: true,
                        options: .init(
                            members: [ ],
                            custom: [
                                "title": .string("SwiftUI heads"),
                                "description": .string("Talking about SwiftUI")
                            ]
                        )
                    )
                    callCreated = true
                }
            }
        }
    }
}
```

Let's review the example above and go over the details.

User setup. First we create a user object. You typically sync your users via a server-side integration from your own backend. Alternatively, you can use guest or anonymous users.

```swift
let user = User(
    id: userId,
    name: "Martin"
)
```

Client init. Next we initialize the client by passing the API key, user and user token.

```swift
self.client = StreamVideo(
    apiKey: apiKey,
    user: user,
    token: .init(stringLiteral: token)
)
```

Create and join call. After the user and client are created, we create a call like this:

```swift
self.call = client.call(callType: "audio_room", callId: callId)
try await call.join(
    create: true,
    options: .init(
        members: [
            .init(userId: "john_smith"),
            .init(userId: "jane_doe"),
        ],
        custom: [
            "title": .string("SwiftUI heads"),
            "description": .string("Talking about SwiftUI")
        ]
    )
)
```

- This creates and joins a call with the type "audio_room" and the specified callId.

- The users with IDs john_smith and jane_doe are added as members of the call.

- We set the title and description custom fields on the call object.

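Your server-side API typically generates the user token: when a user logs in to your app, your backend returns a token that gives them access to the call. To keep this tutorial easy to follow, the code sample above uses hard-coded values. Replace the REPLACE_WITH_... placeholders with your API key, a user ID, a token generated for that user, and a call ID before running the app.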
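If the app fails to connect, check whether the token was generated for the same user ID you pass to User. The sketch below is not part of the StreamVideo SDK. It decodes the payload of a JWT and reads its user_id claim so you can compare it with userId. It assumes the token is a standard three-part JWT that carries a user_id claim. It does not verify the signature, so treat it only as a debugging aid.

```swift
import Foundation

/// Debugging aid (hypothetical helper, not an SDK API): decodes the payload
/// segment of a JWT WITHOUT verifying its signature and returns the
/// `user_id` claim, if present.
/// Assumption: the token is a three-part JWT whose payload has a `user_id` claim.
func userIdClaim(fromToken token: String) -> String? {
    let segments = token.split(separator: ".", omittingEmptySubsequences: false)
    guard segments.count == 3 else { return nil }

    // JWT segments are base64url-encoded without padding; convert the payload
    // to standard base64 and restore the "=" padding Foundation expects.
    var base64 = String(segments[1])
        .replacingOccurrences(of: "-", with: "+")
        .replacingOccurrences(of: "_", with: "/")
    let remainder = base64.count % 4
    if remainder > 0 {
        base64 += String(repeating: "=", count: 4 - remainder)
    }

    guard
        let data = Data(base64Encoded: base64),
        let json = try? JSONSerialization.jsonObject(with: data),
        let claims = json as? [String: Any]
    else { return nil }

    return claims["user_id"] as? String
}
```

For example, you could call userIdClaim(fromToken: token) in init() and log a warning when the result differs from userId. That catches a mismatched token before StreamVideo is created.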

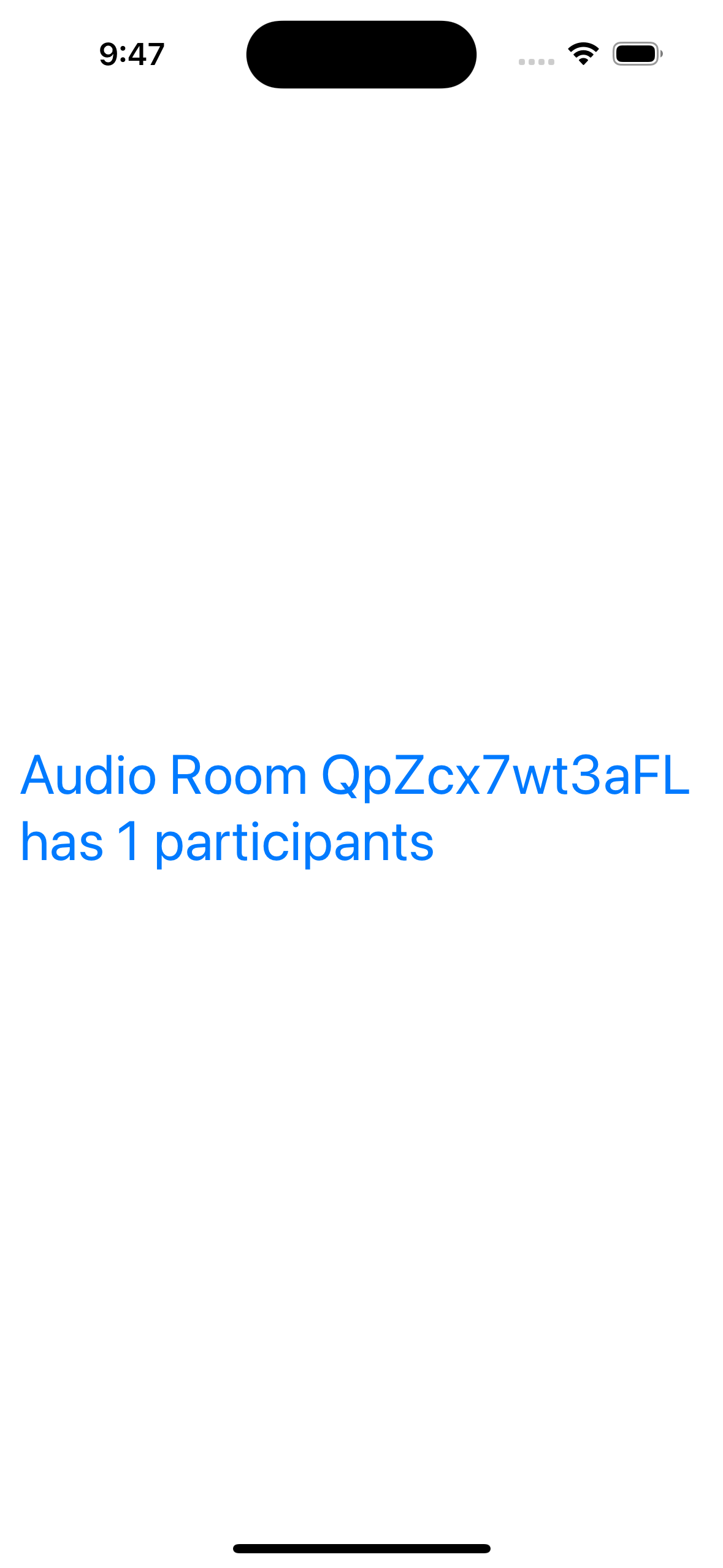

With valid credentials in place, we can join the call. When you run the app, you'll see the following:

Step 4 - Add the Audio Room UI Elements

In this step, we'll add the following:

- Room title and description.

- Controls to toggle the live mode on/off.

- A list of participants with their speaking status.

Room Title & Description

Let's create the components we need to render this and add them to the main app view.

```swift
import SwiftUI
import StreamVideo

struct DescriptionView: View {
    var title: String?
    var description: String?
    var participants: [CallParticipant]

    var body: some View {
        VStack {
            VStack {
                Text("\(title ?? "")")
                    .font(.title)
                    .frame(maxWidth: .infinity, alignment: .leading)
                    .lineLimit(1)
                    .padding([.bottom], 8)

                Text("\(description ?? "")")
                    .font(.body)
                    .frame(maxWidth: .infinity, alignment: .leading)
                    .lineLimit(1)
                    .padding([.bottom], 4)

                Text("\(participants.count) participants")
                    .font(.caption)
                    .frame(maxWidth: .infinity, alignment: .leading)
            }.padding([.leading, .trailing])
        }
    }
}
```

```swift
import SwiftUI
import StreamVideo

struct ParticipantsView: View {
    var participants: [CallParticipant]

    var body: some View {
        Spacer()
        Text("Participants: TODO").font(.body)
        Spacer()
    }
}
```

```swift
import SwiftUI
import StreamVideo

struct ControlsView: View {
    // Passed in from the app; this view does not own the call, so no @State.
    var call: Call
    @ObservedObject var state: CallState

    var body: some View {
        Text("Controls: TODO").font(.body)
    }
}
```
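DescriptionView builds its caption as "\(participants.count) participants". With only one person in the room, that reads "1 participants". The hypothetical participantsCaption(count:) helper below is not part of the SDK. It keeps the wording correct, and inside DescriptionView you would write Text(participantsCaption(count: participants.count)) instead.

```swift
/// Hypothetical helper (not part of StreamVideo): returns the caption shown
/// under the room description, using the singular form for exactly one participant.
func participantsCaption(count: Int) -> String {
    count == 1 ? "1 participant" : "\(count) participants"
}
```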

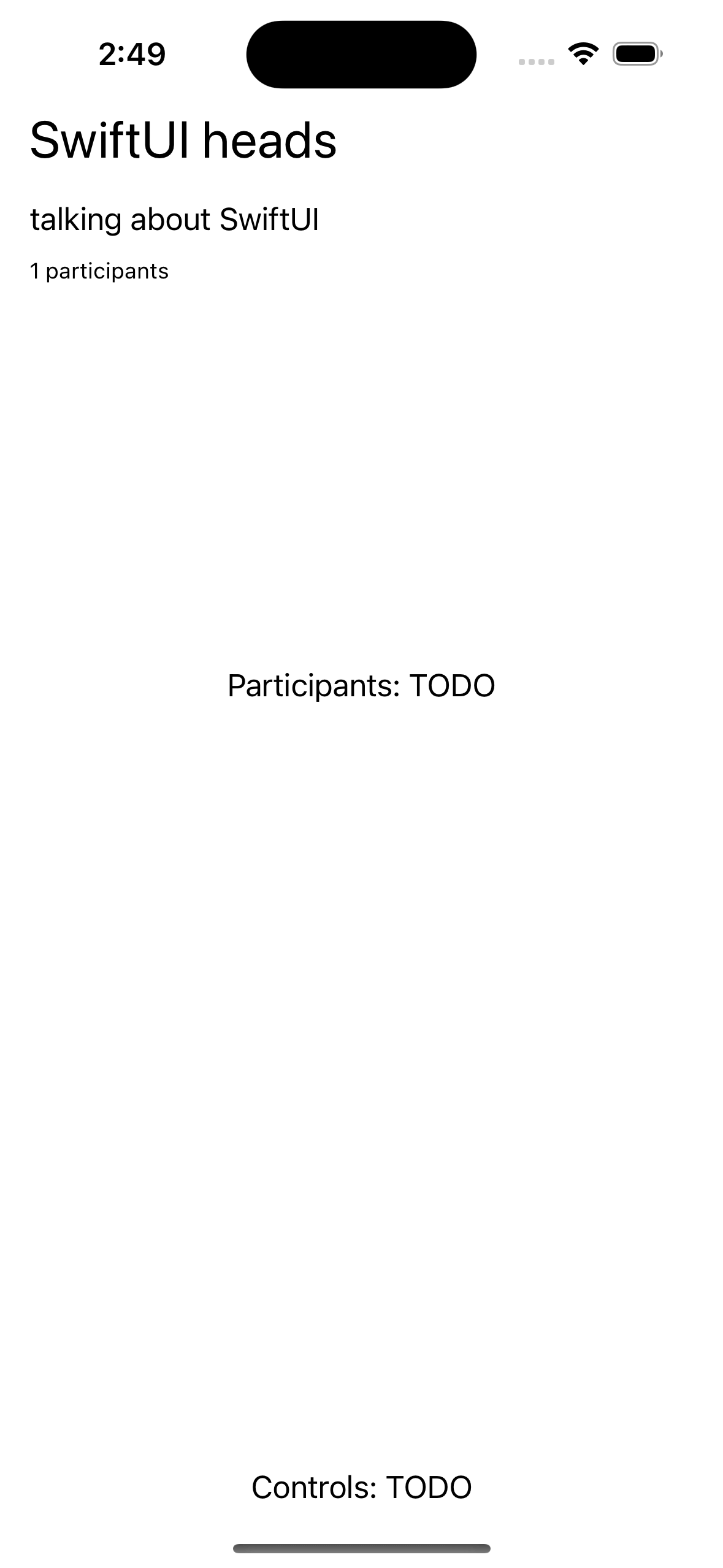

That's it for the basics. Here's how the app's Scene should look now.

```swift
var body: some Scene {
    WindowGroup {
        VStack {
            if callCreated {
                DescriptionView(
                    title: call.state.custom["title"]?.stringValue,
                    description: call.state.custom["description"]?.stringValue,
                    participants: call.state.participants
                )
                ParticipantsView(
                    participants: call.state.participants
                )
                Spacer()
                ControlsView(call: call, state: state)
            } else {
                Text("loading...")
            }
        }.task {
            Task {
                guard !callCreated else { return }
                try await call.join(
                    create: true,
                    options: .init(
                        members: [
                            .init(userId: "john_smith"),
                            .init(userId: "jane_doe"),
                        ],
                        custom: [
                            "title": .string("SwiftUI heads"),
                            "description": .string("Talking about SwiftUI")
                        ]
                    )
                )
                callCreated = true
            }
        }
    }
}
```

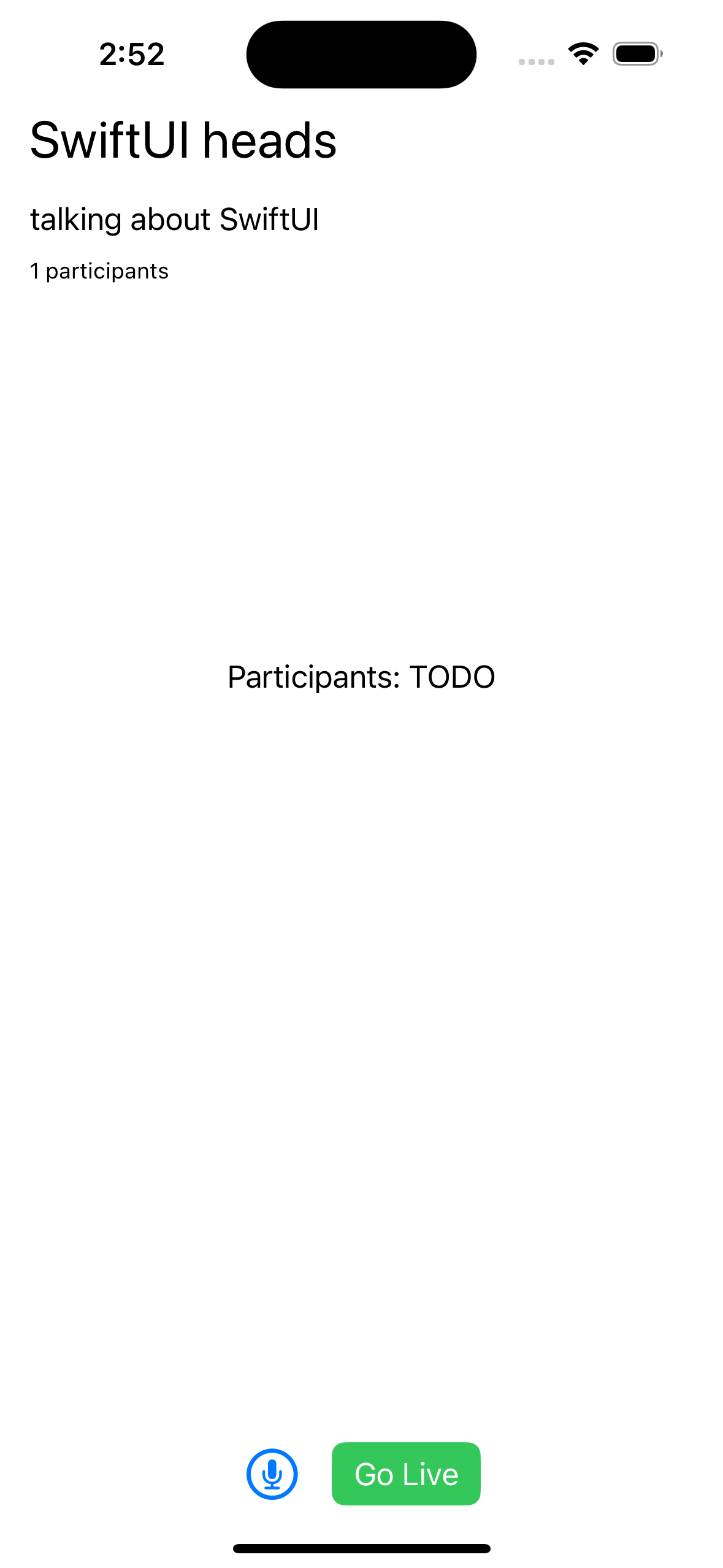

If you run the app now, it should look like this.

The approach is the same for all components. We observe call.state published variables, such as call.state.participants, to determine the call's state and use them to power our UI.

Let's join the audio room from the browser to make this a little more interactive.

Backstage & Live Mode Control

As you probably noticed by opening the same room from the browser, audio rooms are not live by default. Regular users can only join an audio room when it is in live mode. Let's expand the ControlsView and add a button that switches the room between backstage and live mode.

```swift
import SwiftUI
import StreamVideo

struct MicButtonView: View {
    var body: some View {
        Button {
            Task {
                print("handle mic tap")
            }
        } label: {
            Label("", systemImage: "mic.circle").font(.title)
        }
    }
}
```

```swift
import SwiftUI
import StreamVideo

struct LiveButtonView: View {
    var call: Call
    @ObservedObject var state: CallState

    var body: some View {
        if state.backstage {
            Button {
                Task {
                    try await call.goLive()
                }
            } label: {
                Text("Go Live")
            }
            .buttonStyle(.borderedProminent).tint(.green)
        } else {
            Button {
                Task {
                    try await call.stopLive()
                }
            } label: {
                Text("Stop live")
            }
            .buttonStyle(.borderedProminent).tint(.red)
        }
    }
}
```

```swift
import SwiftUI
import StreamVideo

struct ControlsView: View {
    var call: Call
    @ObservedObject var state: CallState

    var body: some View {
        HStack {
            MicButtonView()
            LiveButtonView(call: call, state: state)
        }
    }
}
```

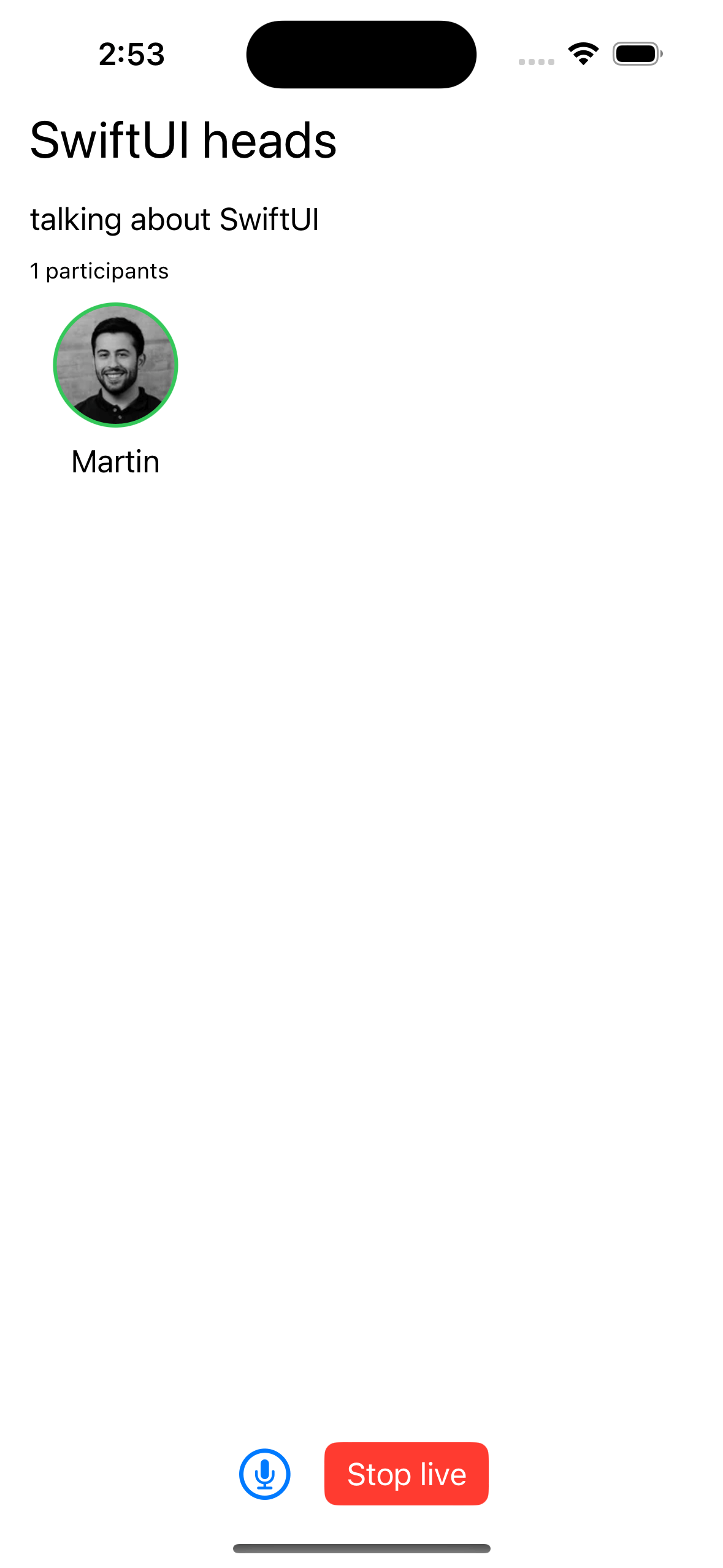

Now the app shows a placeholder mic button (we'll wire it up later) and a button that toggles live mode on and off. If you go live and then open the audio room in the web demo, you should be able to join as a regular user.
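The join rules described in this tutorial fit in a tiny decision function. The sketch below is a simplified, hypothetical model, and RoomRole and canJoin are not SDK types. It assumes the audio_room defaults described here: admins and the call's creator may join while the room is backstage, and everyone else waits until it goes live. The actual rules come from the call type's configuration, which you can edit in the dashboard. A function like this would only be useful for UI hints, such as showing a "waiting for the host" message.

```swift
/// Hypothetical, simplified roles for this sketch (not StreamVideo types).
enum RoomRole {
    case admin
    case creator
    case user
}

/// Simplified model of the default audio_room join rule described in this
/// tutorial. Assumption: real permissions come from the call type configuration,
/// not from client code; this only mirrors them for illustration.
func canJoin(role: RoomRole, isBackstage: Bool) -> Bool {
    guard isBackstage else {
        // The room is live: anyone may join.
        return true
    }
    // Backstage: only admins and the call's creator may join.
    switch role {
    case .admin, .creator:
        return true
    case .user:
        return false
    }
}
```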

List Participants

As a next step, let's render the actual list of participants and show an indicator when they are speaking. To do this, we will create a ParticipantView and render it from the ParticipantsView.

```swift
import SwiftUI
import StreamVideo

struct ParticipantView: View {
    var participant: CallParticipant

    var body: some View {
        VStack {
            ZStack {
                Circle()
                    .fill(participant.isSpeaking ? .green : .white)
                    .frame(width: 68, height: 68)
                AsyncImage(
                    url: participant.profileImageURL,
                    content: { image in
                        image.resizable()
                            .aspectRatio(contentMode: .fit)
                            .frame(maxWidth: 64, maxHeight: 64)
                            .clipShape(Circle())
                    },
                    placeholder: {
                        Image(systemName: "person.crop.circle").font(.system(size: 60))
                    }
                )
            }
            Text("\(participant.name)")
        }
    }
}
```

```swift
import SwiftUI
import StreamVideo

struct ParticipantsView: View {
    var participants: [CallParticipant]

    var body: some View {
        LazyVGrid(columns: [GridItem(.adaptive(minimum: 100))], spacing: 20) {
            ForEach(participants) {
                ParticipantView(participant: $0)
            }
        }
    }
}
```

With these changes, things get more interesting. The app now shows a grid of all participants connected to the call and highlights the ones who are speaking with a green ring around their avatar.

Step 5 - Go live and join from the browser

If you join the call from the browser, the participant list will now update as you open and close the browser tab.

Note how the web interface won't let you share your audio/video. By default, the audio_room call type only allows moderators or admins to speak. Regular participants can request permission to speak. If different defaults make sense for your app, you can edit the call type in the dashboard or create your own.

Step 6 - Requesting permission to speak

Requesting permission to speak is easy. Let's first have a quick look at how the SDK call object exposes this.

Requesting Permission To Speak

```swift
let response = try await call.request(permissions: [.sendAudio])
```

Handling Permission Requests

Permission requests are exposed from call.state on the permissionRequests published variable.

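```swift
if let request = call.state.permissionRequests.first {
    // Hypothetical flag: in a real app this comes from the host's decision (e.g. a button tap).
    let shouldGrant = true

    if shouldGrant {
        // Grant the requested permission.
        try await call.grant(request: request)
    } else {
        // Reject the request.
        request.reject()
    }
}
```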

Let's add another view that shows the last incoming request as well as the buttons to grant or reject it.

```swift
import SwiftUI
import StreamVideo

struct PermissionRequestsView: View {
    var call: Call
    @ObservedObject var state: CallState

    var body: some View {
        if let request = state.permissionRequests.first {
            HStack {
                Text("\(request.user.name) requested to \(request.permission)")
                Button {
                    Task {
                        try await call.grant(request: request)
                    }
                } label: {
                    Label("", systemImage: "hand.thumbsup.circle").tint(.green)
                }
                Button(action: request.reject) {
                    Label("", systemImage: "hand.thumbsdown.circle.fill").tint(.red)
                }
            }
        }
    }
}
```
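The view above interpolates request.permission directly, so the message shows the raw permission value. If that value is something like "send-audio", the result reads awkwardly. The sketch below assumes request.permission is a String and that audio requests use "send-audio". readablePermission(_:) is a hypothetical helper, so check the values your SDK version actually reports. You would use it as Text("\(request.user.name) requested to \(readablePermission(request.permission))").

```swift
import Foundation

/// Hypothetical helper (not part of StreamVideo) that turns a raw permission
/// string into a readable phrase. Assumption: values look like "send-audio";
/// verify them against what your SDK version reports.
func readablePermission(_ permission: String) -> String {
    switch permission {
    case "send-audio":
        return "speak"
    case "send-video":
        return "share video"
    case "screenshare":
        return "share their screen"
    default:
        // Fall back to the raw value with dashes turned into spaces.
        return permission.replacingOccurrences(of: "-", with: " ")
    }
}
```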

Here is the updated Scene code that includes it.

```swift
var body: some Scene {
    WindowGroup {
        VStack {
            if callCreated {
                DescriptionView(
                    title: call.state.custom["title"]?.stringValue,
                    description: call.state.custom["description"]?.stringValue,
                    participants: call.state.participants
                )
                ParticipantsView(
                    participants: call.state.participants
                )
                Spacer()
                PermissionRequestsView(call: call, state: state)
                ControlsView(call: call, state: state)
            } else {
                Text("loading...")
            }
        }.task {
            Task {
                guard !callCreated else { return }
                try await call.join(
                    create: true,
                    options: .init(
                        members: [
                            .init(userId: "john_smith"),
                            .init(userId: "jane_doe"),
                        ],
                        custom: [
                            "title": .string("SwiftUI heads"),
                            "description": .string("Talking about SwiftUI")
                        ]
                    )
                )
                callCreated = true
            }
        }
    }
}
```

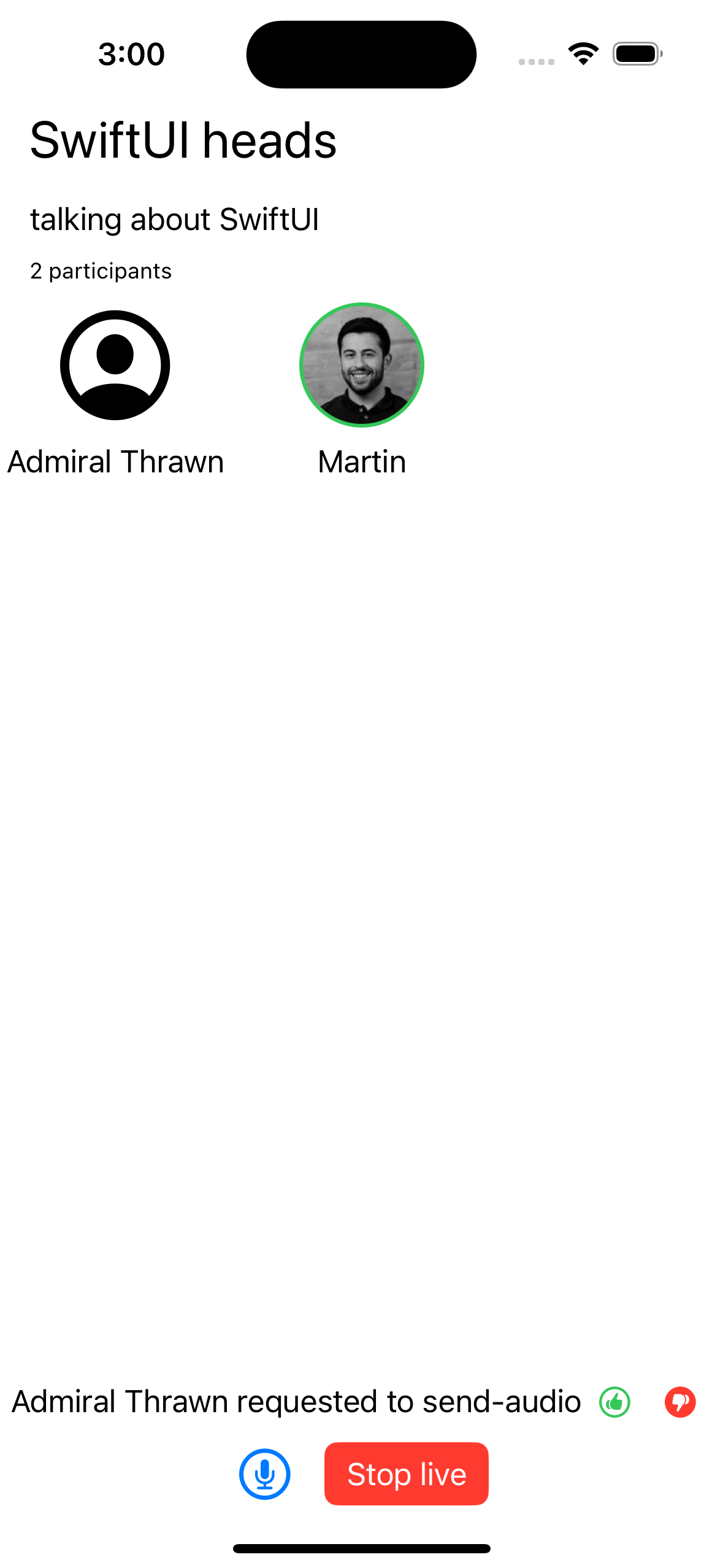

Now, when a user requests access to speak, the app will look like this.

Step 7 - Add a Microphone Control

You can read and manage the microphone status through call.microphone, an observable object whose status property is published.

```swift
/// Read the microphone's status
let isEnabled = call.microphone.status == .enabled

/// Toggle between enabled/disabled microphone status.
try await call.microphone.toggle()

/// Enable the microphone.
try await call.microphone.enable()

/// Disable the microphone.
try await call.microphone.disable()
```

Let's update the MicButtonView with microphone handling.

```swift
import SwiftUI
import StreamVideo

struct MicButtonView: View {
    @ObservedObject var microphone: MicrophoneManager

    var body: some View {
        Button {
            Task {
                try await microphone.toggle()
            }
        } label: {
            Image(systemName: microphone.status == .enabled ? "mic.circle" : "mic.slash.circle")
                .foregroundColor(microphone.status == .enabled ? .red : .primary)
                .font(.title)
        }
    }
}
```

We can now pass call.microphone to MicButtonView from the ControlsView.

```swift
import SwiftUI
import StreamVideo

struct ControlsView: View {
    var call: Call
    @ObservedObject var state: CallState

    var body: some View {
        HStack {
            MicButtonView(microphone: call.microphone)
            LiveButtonView(call: call, state: state)
        }
    }
}
```

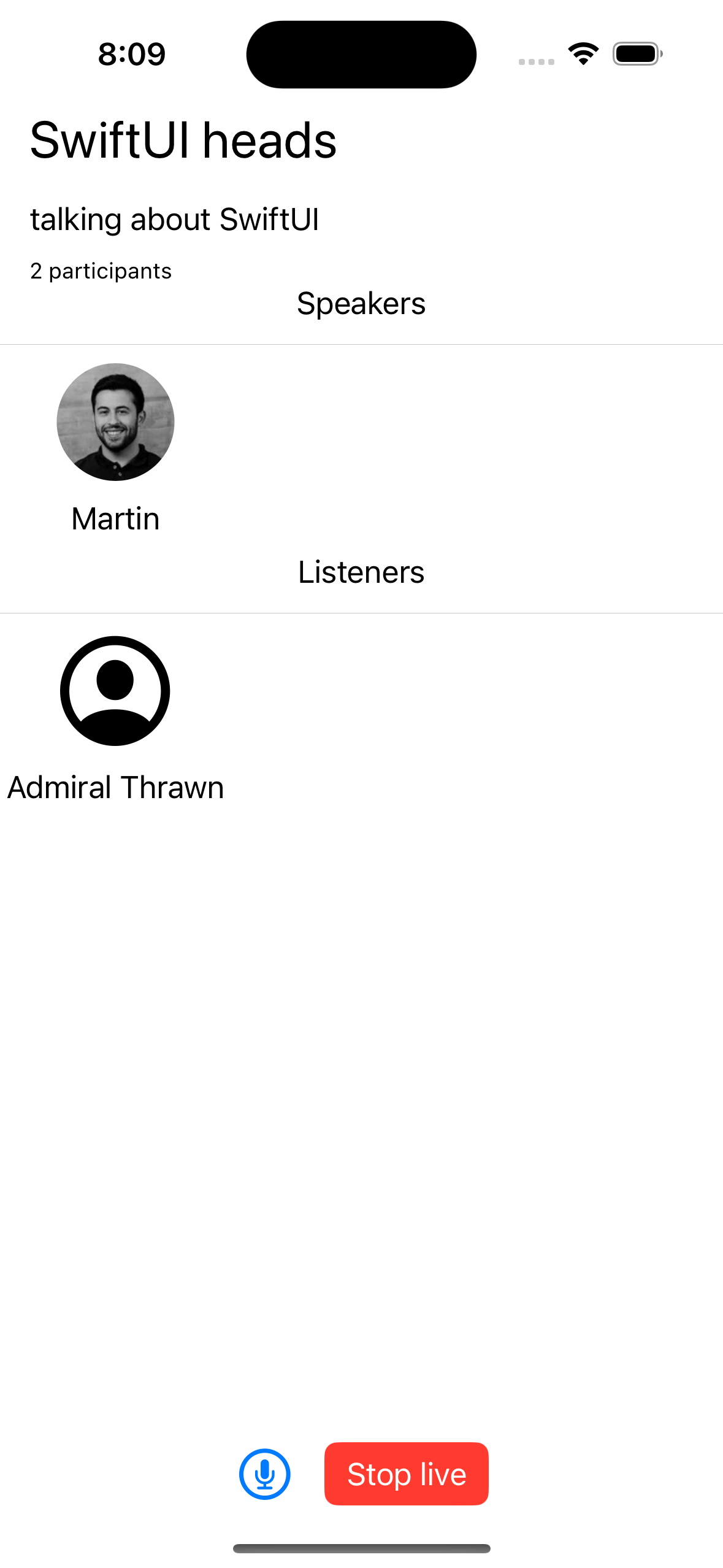

Step 8 - Render Group Participants

It is common for audio rooms and similar interactive audio/video experiences to show users in separate groups. Let's see how we can update this application to render participants in two separate sections: speakers and listeners.

Building custom layouts is very simple. All we need to do is apply some filtering to the call.state.participants published variable.

```swift
// A list of participants; by default this list is ordered by the ID of the user
call.state.participants

// Speakers: participants that have an audio track (i.e. are allowed to speak and have a mic configured)
call.state.participants.filter { $0.hasAudio }

// Listeners: participants that do not have an audio track
call.state.participants.filter { !$0.hasAudio }
```
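The two filter calls above each walk the participant list, so the list is traversed twice. That is usually fine. If you prefer to compute both groups in one pass, you can use a small generic helper like the hypothetical partitioned(_:by:) below, which is not an SDK or standard-library function. Because it is generic, you could call it as partitioned(call.state.participants) { $0.hasAudio } and pass groups.matching and groups.rest to the two ParticipantsView instances. The example at the bottom uses a stand-in struct instead of CallParticipant so it runs without the SDK.

```swift
/// Hypothetical helper: splits `items` into two arrays in a single pass,
/// elements for which `predicate` returns true and all the others.
/// Relative order is preserved within each group.
func partitioned<Element>(
    _ items: [Element],
    by predicate: (Element) -> Bool
) -> (matching: [Element], rest: [Element]) {
    var matching: [Element] = []
    var rest: [Element] = []
    for item in items {
        if predicate(item) {
            matching.append(item)
        } else {
            rest.append(item)
        }
    }
    return (matching, rest)
}

// Example with a hypothetical stand-in for CallParticipant.
struct DemoParticipant {
    let name: String
    let hasAudio: Bool
}

let demo = [
    DemoParticipant(name: "Martin", hasAudio: true),
    DemoParticipant(name: "Jane", hasAudio: false),
    DemoParticipant(name: "John", hasAudio: true),
]
let groups = partitioned(demo) { $0.hasAudio }
// groups.matching (speakers): Martin, John; groups.rest (listeners): Jane
```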

We already have a view to display participants, so all we need to do is render it twice: once for speakers and once for listeners. Here's how the scene body looks.

```swift
var body: some Scene {
    WindowGroup {
        VStack {
            if callCreated {
                DescriptionView(
                    title: call.state.custom["title"]?.stringValue,
                    description: call.state.custom["description"]?.stringValue,
                    participants: call.state.participants
                )
                Text("Speakers")
                Divider()
                ParticipantsView(
                    participants: call.state.participants.filter { $0.hasAudio }
                )
                Text("Listeners")
                Divider()
                ParticipantsView(
                    participants: call.state.participants.filter { !$0.hasAudio }
                )
                Spacer()
                PermissionRequestsView(call: call, state: state)
                ControlsView(call: call, state: state)
            } else {
                Text("loading...")
            }
        }.task {
            Task {
                guard !callCreated else { return }
                try await call.join(
                    create: true,
                    options: .init(
                        members: [
                            .init(userId: "john_smith"),
                            .init(userId: "jane_doe"),
                        ],
                        custom: [
                            "title": .string("SwiftUI heads"),
                            "description": .string("Talking about SwiftUI")
                        ]
                    )
                )
                callCreated = true
            }
        }
    }
}
```

Explore Other Built-in Features

There are a few more exciting features that you can use to build audio rooms.

- Query Calls: You can query calls to easily show upcoming calls, calls that recently finished as well as call previews.

- Reactions & Custom events: Reactions and custom events are supported.

- Recording & Broadcasting: You can record your calls.

- Chat: Stream's Chat SDKs are fully featured, and you can integrate them in the call.

- Moderation: Moderation capabilities are built into the product.

- Transcriptions: Transcriptions aren't available yet, but they are due to launch soon.

Recap

It was fun to see how quickly you can build an audio-room for your app. Please let us know if you ran into any issues. Our team is also happy to review your UI designs and offer recommendations on how to integrate them with Stream.

Let's recap what we've learned.

- You set up a call with call = client.call(callType: "audio_room", callId: "123").

- The call type audio_room controls which features are enabled and how permissions are set up.

- By default, audio_room enables backstage mode and only allows admins and the creator of the call to join before the call goes live.

- When you join a call with call.join(), real-time communication is set up for audio and video calling.

- State objects in call.state and call.state.participants make it easy to build your custom UIs.

- All calls run on Stream's Global Edge Network of video servers. Being closer to your users improves the latency and reliability of calls. For audio rooms, we use Opus RED and Opus DTX for optimal audio quality.

The SDKs enable you to build audio rooms, video calling and livestreaming in days.

We hope you've enjoyed this tutorial. Please feel free to reach out if you have any suggestions or questions. You can find the code for this tutorial in this GitHub repository.

Final Thoughts

This is one of our video/voice app tutorials. We built a fully functioning audio room app with our iOS SDK components library. We also showed how easy it is to customize the behavior and styles of the audio room app with minimal code changes.

Both the Video SDK for iOS and the API have more features supporting advanced use-cases.