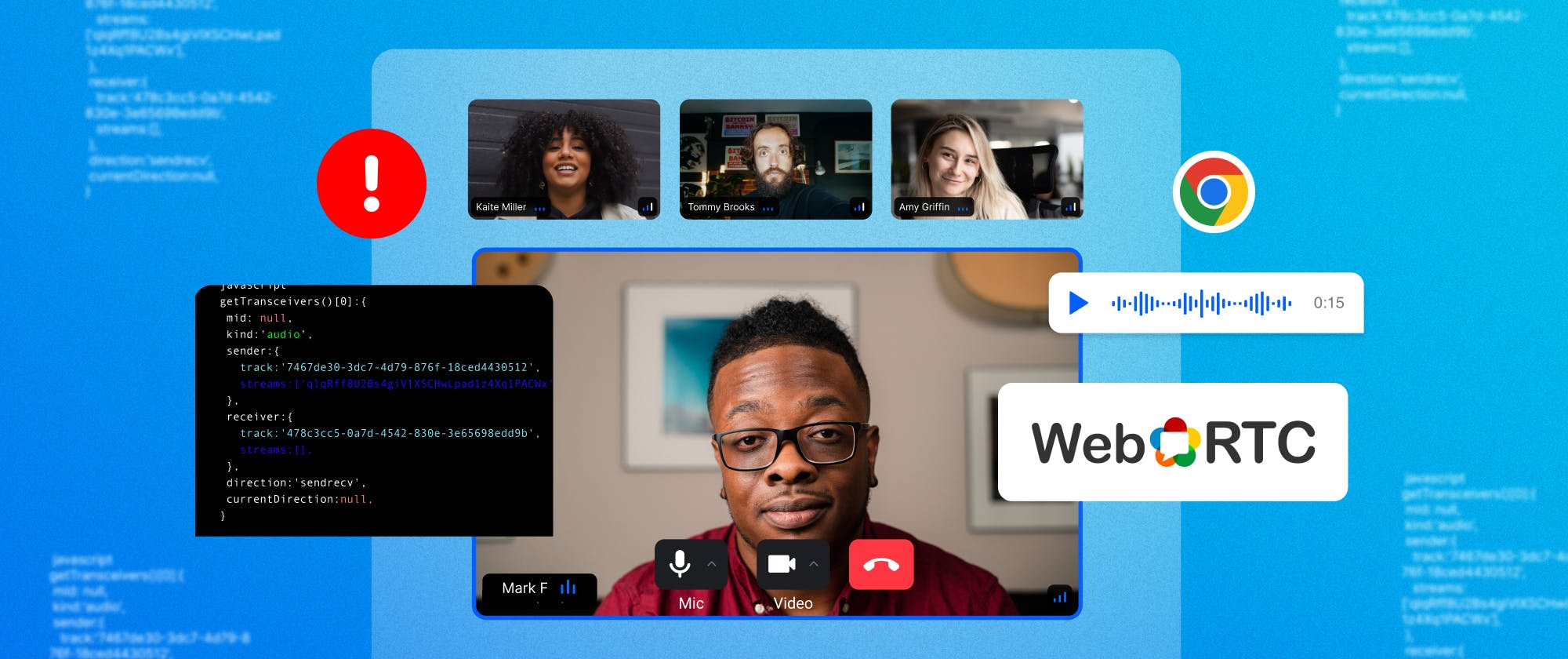

WebRTC is an open-source technology that gives web applications real-time communication capabilities through standard browser APIs. With WebRTC, you can build high-quality, low-latency video, audio, and data-sharing applications that run directly in a web browser, without plugins or additional software.

While building our global edge network for video calling, we experienced the pains of WebRTC debugging firsthand. In this article, we look at how to debug WebRTC calls with Chrome's built-in WebRTC internals tool.

Chrome’s WebRTC Internal Tool

Chrome's WebRTC internals tool (version 87 or higher) is a suite of debugging tools built into the browser.

One of its key features is the ability to view real-time information about the media streams in a WebRTC call. This includes details about the audio and video tracks, the codecs in use, and the overall quality of each stream, which can be very helpful when resolving poor audio or video quality.

The tool also shows the network configuration used in a WebRTC call, such as the STUN/TURN servers and the ICE candidates. This information is valuable when solving connectivity problems, for example when one peer cannot connect to the other.
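Each ICE candidate shown in the tool is a line of the form `candidate:<foundation> <component> <transport> <priority> <address> <port> typ <type> ...`, and its `typ` field tells you where the address came from: `host` (a local interface), `srflx` (an address discovered via STUN), `prflx` (an address learned during connectivity checks), or `relay` (a TURN server). The sketch below is a hypothetical helper, not part of Chrome or the WebRTC API: it counts candidate types in a list of candidate strings (for example, copied from webrtc-internals or read from `RTCIceCandidate.candidate`) and turns missing types into rough hints. The hints are heuristics, not a definitive diagnosis.

```typescript
// Hypothetical helper (not a Chrome or WebRTC API): summarize ICE candidate
// types from candidate strings such as those shown in webrtc-internals.
type CandidateType = "host" | "srflx" | "prflx" | "relay";

const KNOWN_TYPES: readonly CandidateType[] = ["host", "srflx", "prflx", "relay"];

// Extract the token after the "typ" keyword, e.g.
// "candidate:1 1 udp 2122260223 192.168.1.10 54321 typ host" -> "host".
function candidateType(candidate: string): CandidateType | null {
  const tokens = candidate.trim().split(/\s+/);
  const i = tokens.indexOf("typ");
  if (i === -1 || i + 1 >= tokens.length) return null;
  const value = tokens[i + 1];
  return (KNOWN_TYPES as readonly string[]).indexOf(value) !== -1 ? (value as CandidateType) : null;
}

// Count how many candidates of each type were gathered; unparsable lines are skipped.
function countCandidates(candidates: string[]): Record<CandidateType, number> {
  const counts: Record<CandidateType, number> = { host: 0, srflx: 0, prflx: 0, relay: 0 };
  for (const c of candidates) {
    const t = candidateType(c);
    if (t !== null) counts[t] += 1;
  }
  return counts;
}

// Heuristic hints only: a missing candidate type narrows down where to look.
function connectivityHints(counts: Record<CandidateType, number>): string[] {
  const total = counts.host + counts.srflx + counts.prflx + counts.relay;
  if (total === 0) return ["no candidates gathered: check ICE gathering and signaling"];
  const hints: string[] = [];
  if (counts.srflx === 0) hints.push("no srflx candidates: STUN server missing, unreachable, or blocked");
  if (counts.relay === 0) hints.push("no relay candidates: TURN server missing, misconfigured, or blocked");
  return hints;
}
```

A missing `relay` type is worth checking first when calls fail only on restrictive corporate or mobile networks, since those are the cases where a TURN relay is usually needed.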

Here are some examples of issues that can be debugged using this tool:

- The internal tool can help determine the root cause of network connectivity issues, such as firewall or NAT traversal problems, that can block WebRTC communication.
- The tool can help diagnose signaling problems, such as issues with offer/answer negotiation, ICE candidate exchange, and connection establishment.
- The tool offers real-time statistics on audio and video quality, including packet loss, delay, and jitter, which can be used to locate and resolve problems affecting the quality of the media streams.
- The tool can be used to find WebRTC performance issues, such as high CPU or network utilization, that may degrade the user experience.
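Packet loss and jitter come from the `inbound-rtp` entries returned by `RTCPeerConnection.getStats()`, where `packetsReceived` and `packetsLost` are cumulative counters and `jitter` is reported in seconds. Because the counters are cumulative, comparing two samples gives the quality of the most recent interval rather than a whole-call average. The sketch below assumes you have already copied those fields into plain objects, for example from two `getStats()` calls about a second apart; the `InboundRtpSample` and `intervalQuality` names are our own.

```typescript
// Assumed subset of an "inbound-rtp" stats entry. Counters are cumulative
// since the stream started; jitter is in seconds.
interface InboundRtpSample {
  packetsReceived: number;
  packetsLost: number; // may be negative (duplicates), so deltas are clamped below
  jitter: number;
}

interface IntervalQuality {
  lossPercent: number;
  jitterMs: number;
}

// Compare two samples so the result reflects the recent interval only.
function intervalQuality(prev: InboundRtpSample, curr: InboundRtpSample): IntervalQuality {
  const received = Math.max(0, curr.packetsReceived - prev.packetsReceived);
  const lost = Math.max(0, curr.packetsLost - prev.packetsLost);
  const expected = received + lost;
  return {
    lossPercent: expected > 0 ? (100 * lost) / expected : 0,
    // jitter is already a smoothed estimate; only the unit changes here.
    jitterMs: curr.jitter * 1000,
  };
}
```

For example, going from 1000 received / 10 lost to 1950 received / 60 lost means 50 of the last 1000 expected packets were lost, a 5% loss rate for that interval.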

To launch the WebRTC internals tool, open a new tab in Chrome and go to chrome://webrtc-internals.

This page displays troubleshooting information for the currently active WebRTC sessions. The next section goes into more detail about how to use this information to diagnose issues.

Overview of the WebRTC-Internals API

Before you can troubleshoot with Chrome's internals tool, you need an active peer connection to inspect.

Here, as shown in the following image, we set up a peer-to-peer connection using the "Basic peer connection demo in a single tab" from the WebRTC samples repository.

webrtc-internals also lets you download the statistics and peer connection updates as a single, often very large, JSON dump, as shown in the following image.

Download this dump file for a more detailed walkthrough.
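Because the dump can be large, it is often easier to query it with a small script than to scroll through it. The sketch below assumes the layout we have seen in Chrome's dumps: a top-level `PeerConnections` object keyed by connection ID, whose entries contain an `updateLog` array of `{ time, type, value }` records. This format is not formally documented and may change between Chrome versions, and the helper names are hypothetical.

```typescript
// Assumed (not formally documented) shape of a webrtc-internals dump.
// Field names may differ between Chrome versions.
interface UpdateLogEntry {
  time: string;
  type: string; // e.g. "createOffer", "connectionstatechange"
  value: string;
}

interface PeerConnectionDump {
  url?: string;
  updateLog?: UpdateLogEntry[];
}

interface InternalsDump {
  PeerConnections?: Record<string, PeerConnectionDump>;
}

// For each peer connection, list the logged API calls and events in order.
function eventTypes(dumpJson: string): Map<string, string[]> {
  const dump = JSON.parse(dumpJson) as InternalsDump;
  const result = new Map<string, string[]>();
  for (const [id, pc] of Object.entries(dump.PeerConnections ?? {})) {
    result.set(id, (pc.updateLog ?? []).map((entry) => entry.type));
  }
  return result;
}

// Value of the last entry of a given type (e.g. "connectionstatechange"),
// or undefined if it never fired. The raw value is returned unmodified.
function lastValue(dumpJson: string, pcId: string, type: string): string | undefined {
  const log = (JSON.parse(dumpJson) as InternalsDump).PeerConnections?.[pcId]?.updateLog ?? [];
  for (let i = log.length - 1; i >= 0; i--) {
    if (log[i].type === type) return log[i].value;
  }
  return undefined;
}
```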

By visiting chrome://webrtc-internals, we can inspect the various components of a WebRTC call and view real-time information about the media streams, peer-to-peer data transfers, and network configuration, as shown in the image below.

getUserMedia API Trace

The getUserMedia API (navigator.mediaDevices.getUserMedia()) allows web applications to access a user's camera and microphone and returns a MediaStream containing the captured audio and video tracks in real time.

When you inspect the getUserMedia API trace, it shows two entries: the getUserMedia call and the getUserMedia result.

The getUserMedia call entry shows what was requested, including whether audio and video were requested for the active peer connection.

The getUserMedia result entry describes the resulting stream: its audio tracks (as returned by getAudioTracks()) and video tracks (as returned by getVideoTracks()), each with its ID and default label, such as External Microphone (Built-in) for audio and FaceTime HD Camera (Built-in) for video, as shown in the following image.
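The same information can be checked from application code. The sketch below uses minimal structural types so it runs without a browser; a real `MediaStream` returned by `navigator.mediaDevices.getUserMedia({ audio: true, video: true })` has the members it relies on (`getAudioTracks()`, `getVideoTracks()`, and each track's `id`, `kind`, `label`, `enabled`, and `readyState`). The helper names `describeTracks` and `missingKinds` are our own.

```typescript
// Minimal structural types: a real MediaStream / MediaStreamTrack has these members.
interface TrackLike {
  id: string;
  kind: string;
  label: string;
  enabled: boolean;
  readyState: string; // "live" or "ended"
}

interface StreamLike {
  getAudioTracks(): TrackLike[];
  getVideoTracks(): TrackLike[];
}

// One line per track, similar to what the getUserMedia result entry lists.
function describeTracks(stream: StreamLike): string[] {
  return [...stream.getAudioTracks(), ...stream.getVideoTracks()].map(
    (t) => `${t.kind} ${t.id} "${t.label}" ${t.readyState}${t.enabled ? "" : " (disabled)"}`,
  );
}

// Requested kinds that currently have no live track
// (for example, after a device was unplugged).
function missingKinds(stream: StreamLike, requested: { audio: boolean; video: boolean }): string[] {
  const hasLive = (tracks: TrackLike[]) => tracks.some((t) => t.readyState === "live");
  const missing: string[] = [];
  if (requested.audio && !hasLive(stream.getAudioTracks())) missing.push("audio");
  if (requested.video && !hasLive(stream.getVideoTracks())) missing.push("video");
  return missing;
}
```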

RTCPeerConnection API Trace

The RTCPeerConnection API trace lists the methods called and the events fired over the life of a peer connection. Together, these entries show how the connection state evolved. The following image shows the API trace for the RTCPeerConnection events and methods.

We can use these events and methods to drive our troubleshooting. Here, we go through a few of the steps involved in an active connection.

transceiverAdded: Logged when a transceiver is added to the peer connection. It shows the audio and video tracks attached to it, which lets us check whether the expected tracks are present.

```
getTransceivers()[0]: {
  mid: null,
  kind: 'audio',
  sender: {
    track: '7467de30-3dc7-4d79-876f-18ced4430512',
    streams: ['qlqRff8U2Bs4giVlXSCHwLpad1z4Xq1PACWx'],
  },
  receiver: {
    track: '478c3cc5-0a7d-4542-830e-3e65698edd9b',
    streams: [],
  },
  direction: 'sendrecv',
  currentDirection: null,
}
```
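To check transceivers from code rather than from the trace, you can inspect `pc.getTransceivers()`. The sketch below is a hypothetical heuristic checker: it flags a transceiver that is set to send but has no sender track, one that has not been negotiated yet (`currentDirection` is `null`), and one whose negotiated direction differs from the requested one. It uses structural types, so an array of live `RTCRtpTransceiver` objects should be accepted, but which conditions are worth flagging is our own assumption.

```typescript
// Structural subset of RTCRtpTransceiver used by this sketch.
interface TrackRef {
  id: string;
  kind: string;
}

interface TransceiverLike {
  mid: string | null;
  direction: string;
  currentDirection: string | null;
  sender: { track: TrackRef | null };
  receiver: { track: TrackRef };
}

// Heuristic notes to review when a track seems to be missing.
function transceiverNotes(transceivers: TransceiverLike[]): string[] {
  const notes: string[] = [];
  transceivers.forEach((t, i) => {
    const label = `transceiver ${i} (${t.receiver.track.kind}, mid=${t.mid ?? "null"})`;
    const wantsToSend = t.direction === "sendrecv" || t.direction === "sendonly";
    if (wantsToSend && t.sender.track === null) {
      notes.push(`${label}: direction is ${t.direction} but no track is attached to the sender`);
    }
    if (t.currentDirection === null) {
      notes.push(`${label}: not negotiated yet (normal before offer/answer completes)`);
    } else if (t.currentDirection !== t.direction) {
      notes.push(`${label}: negotiated ${t.currentDirection}, requested ${t.direction}`);
    }
  });
  return notes;
}
```

A direction mismatch is not always a bug: if the remote side only wants to receive, a local `sendrecv` transceiver is negotiated as `sendonly`.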

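createOffer: Logged when createOffer() is called, together with the options passed to it. In this example, the options request incoming audio and video, enable voice activity detection, and do not request an ICE restart.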

```
createOffer
options: {offerToReceiveVideo: 1, offerToReceiveAudio: 1, voiceActivityDetection: true, iceRestart: false}
```

createOfferOnSuccess: Logged when createOffer() succeeds. It shows the resulting session description, including its type (offer) and SDP.

setLocalDescription: A WebRTC method that sets the local session description for a peer connection. The trace shows the type and SDP passed to the setLocalDescription call.

setLocalDescriptionOnSuccess: Logged when setLocalDescription() completes successfully.

signalingstatechange: An event fired when the signaling state of a peer connection changes. The signaling state represents the current stage of the WebRTC offer/answer negotiation and can take values such as "stable", "have-local-offer", and "have-remote-offer".
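The order of these entries follows the offer/answer state machine. The sketch below models a simplified subset of it (offers and answers only; provisional answers, rollback, and the closed state are left out) and replays a sequence of `setLocalDescription`/`setRemoteDescription` calls to produce the `signalingstatechange` values you would expect in the trace. For the offering peer, that is `have-local-offer` followed by `stable`. The type and function names are hypothetical.

```typescript
// Simplified offer/answer signaling state machine (offers and answers only).
type SignalingState = "stable" | "have-local-offer" | "have-remote-offer";
type Step = "local-offer" | "remote-offer" | "local-answer" | "remote-answer";

const TRANSITIONS: Record<SignalingState, Partial<Record<Step, SignalingState>>> = {
  stable: { "local-offer": "have-local-offer", "remote-offer": "have-remote-offer" },
  "have-local-offer": { "local-offer": "have-local-offer", "remote-answer": "stable" },
  "have-remote-offer": { "remote-offer": "have-remote-offer", "local-answer": "stable" },
};

// Replay description calls and return the expected signalingstatechange values.
// Throws on steps outside this simplified table. Note: modern browsers perform an
// implicit rollback for a remote offer in have-local-offer, which is not modelled here.
function replay(steps: Step[], start: SignalingState = "stable"): SignalingState[] {
  const changes: SignalingState[] = [];
  let state = start;
  for (const step of steps) {
    const next = TRANSITIONS[state][step];
    if (next === undefined) throw new Error(`${step} is not valid in state ${state}`);
    if (next !== state) changes.push(next); // no event fires when the state is unchanged
    state = next;
  }
  return changes;
}
```

If the trace shows a `setRemoteDescription` with an answer while the connection is still `stable`, the call fails; that ordering usually points at a signaling bug, such as an answer delivered to the wrong peer.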

icegatheringstatechange: An event fired when the ICE (Interactive Connectivity Establishment) gathering state of a peer connection changes. The possible gathering states are "new", "gathering", and "complete".

setRemoteDescription: This is a method in WebRTC that sets the remote session description for a peer connection.

setRemoteDescriptionOnSuccess: Logged when setRemoteDescription() completes successfully.

connectionstatechange: An event fired whenever the state of the connection between the two peers changes. The possible connection states are "new", "connecting", "connected", "disconnected", "failed", and "closed".
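The state-change events described above can also be recorded by the application itself, which helps when a user reports a failed call and no webrtc-internals dump is available. The sketch below attaches listeners for the four state-change events and records each new state with a timestamp. It depends only on a small structural interface that a real `RTCPeerConnection` should satisfy; the interface and function names are hypothetical.

```typescript
// Structural subset of RTCPeerConnection used by this sketch.
interface PeerConnectionLike {
  readonly connectionState: string;
  readonly iceConnectionState: string;
  readonly iceGatheringState: string;
  readonly signalingState: string;
  addEventListener(type: string, listener: () => void): void;
}

interface StateChange {
  at: number;
  event: string;
  state: string;
}

// Event name -> property holding the new state once the event fires.
const WATCHED = {
  connectionstatechange: "connectionState",
  iceconnectionstatechange: "iceConnectionState",
  icegatheringstatechange: "iceGatheringState",
  signalingstatechange: "signalingState",
} as const;

// Record every state change with a timestamp, a small in-app event log.
function recordStateChanges(pc: PeerConnectionLike, now: () => number = Date.now): StateChange[] {
  const log: StateChange[] = [];
  for (const event of Object.keys(WATCHED) as (keyof typeof WATCHED)[]) {
    const prop = WATCHED[event];
    pc.addEventListener(event, () => log.push({ at: now(), event, state: pc[prop] }));
  }
  return log;
}

// First time the connection reached "failed", if ever.
function firstFailure(log: StateChange[]): StateChange | undefined {
  return log.find((e) => e.event === "connectionstatechange" && e.state === "failed");
}
```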

webrtc-internals also presents this data as graphs that plot each network and media performance metric over time. The graphs make trends easy to spot, for example when packet loss starts growing, bandwidth decreases, or latency increases.
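These graphs are derived from cumulative counters sampled over time, and you can build a similar timeline yourself. For example, the received bitrate of a stream is the change in `bytesReceived` between two `inbound-rtp` stats samples divided by the change in `timestamp` (in milliseconds). The sketch below computes that and flags sharp drops; the 30% threshold and all names are arbitrary assumptions for illustration.

```typescript
// Assumed subset of an "inbound-rtp" stats entry: cumulative bytes and a timestamp in ms.
interface BytesSample {
  timestamp: number;
  bytesReceived: number;
}

interface BitratePoint {
  kbps: number;
  drop: boolean;
}

// Bits per millisecond equals kilobits per second, so no extra scaling is needed.
function bitrateKbps(prev: BytesSample, curr: BytesSample): number {
  const dtMs = curr.timestamp - prev.timestamp;
  if (dtMs <= 0) return 0;
  return ((curr.bytesReceived - prev.bytesReceived) * 8) / dtMs;
}

// Build a bitrate timeline and flag intervals where the bitrate fell by more
// than dropRatio compared with the previous interval.
function bitrateTimeline(samples: BytesSample[], dropRatio = 0.3): BitratePoint[] {
  const out: BitratePoint[] = [];
  for (let i = 1; i < samples.length; i++) {
    const kbps = bitrateKbps(samples[i - 1], samples[i]);
    const prevKbps = out.length > 0 ? out[out.length - 1].kbps : kbps;
    out.push({ kbps, drop: prevKbps > 0 && kbps < prevKbps * (1 - dropRatio) });
  }
  return out;
}
```

Plotting the flagged points next to the loss percentage for the same intervals reproduces the kind of correlation the webrtc-internals graphs are useful for.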

Conclusion

Chrome's WebRTC internals tool gives developers a powerful suite of debugging tools for troubleshooting WebRTC-related issues. The ability to view real-time information about the media streams, peer-to-peer data transfer, and network configuration can be invaluable when resolving poor video and audio quality, network connectivity problems, and more. If you're working on a WebRTC project, be sure to take advantage of this tool.

If you would like to avoid the hassle of building a WebRTC solution from scratch, check out Stream’s upcoming video calling API.