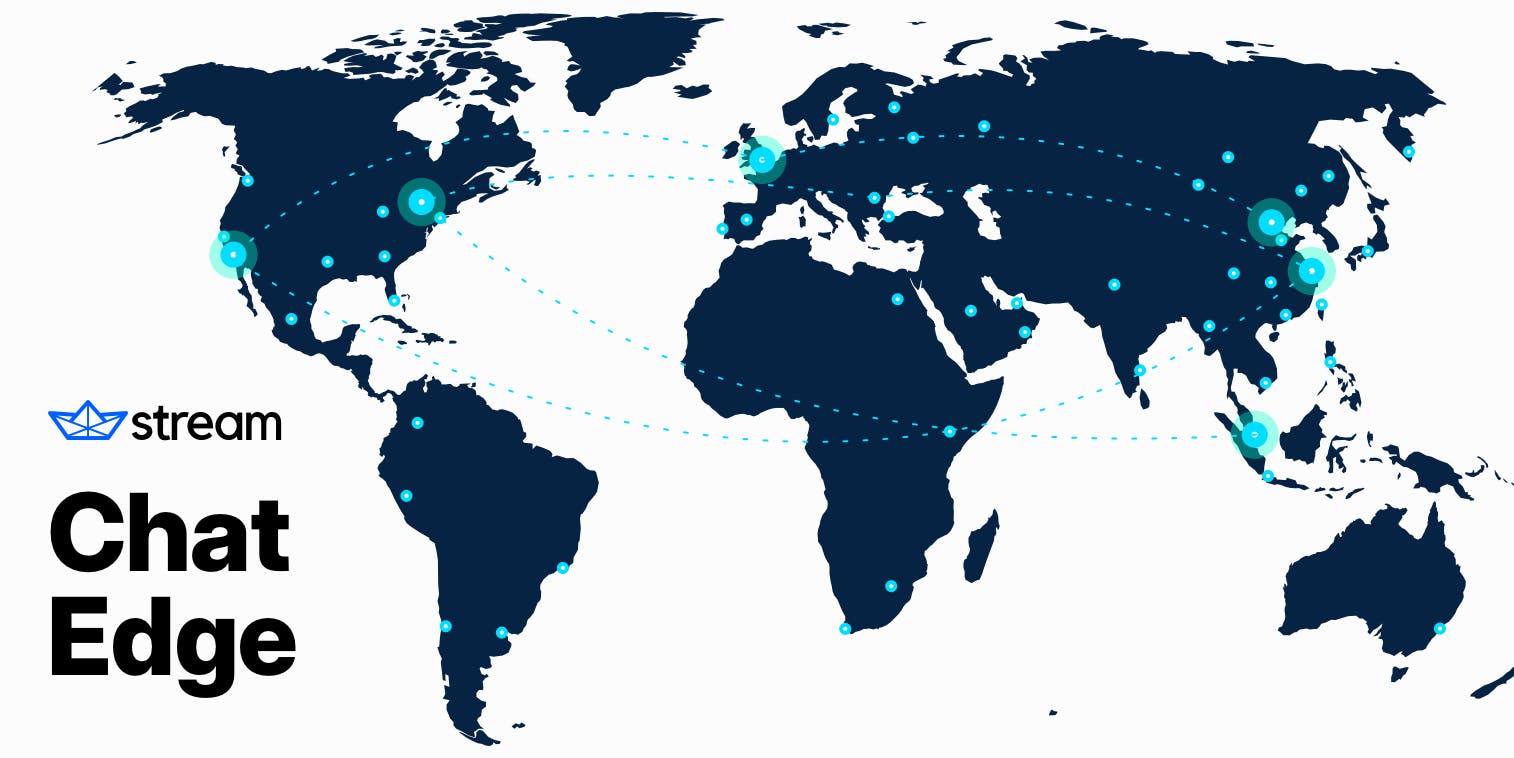

Today's digital world has gone global: app users expect to communicate with people on the other side of the world as easily as with someone nearby. Delays in real-time communication hurt the user experience and negatively impact metrics such as engagement, satisfaction, and churn. Your app needs an API provider that can guarantee low-latency communication for users everywhere. Stream's Edge Infrastructure is a high-performance solution that lets customers using any of our APIs efficiently connect and serve users across multiple continents.

Edge computing relies on a globally distributed network of servers that processes requests closer to the devices that make them, improving performance, reliability, and app scalability.

Unlike messaging services hosted on traditional cloud infrastructure, our Edge network means API and SDK performance no longer depends on how close users are to a regional data center. Instead, servers located around the world reduce latency by up to five times and eliminate timeout errors caused by poor WiFi or mobile connectivity.

As the first chat API and SDK provider to offer this technology, Stream is uniquely equipped to power in-app messaging for the world's largest enterprise organizations, with limitless scalability, 99.999% uptime, and <9ms API latency globally.

Let's take a closer look at some of the architectural challenges this approach solves, how our team approached the transition process, and why we believe edge computing is the future of cloud infrastructure.

Top 3 Challenges of Traditional Cloud Infrastructure

The cloud and SaaS revolutions changed how we develop and deliver apps, paving the way for faster iteration and major gains in performance and functionality. Users now expect seamless, instantaneous responses from their apps, but in certain situations, geographic distance and physical infrastructure limits can still cause perceptible latency.

The problem boils down to a couple of factors:

- The internet is slow, and the further your data needs to travel, the higher the latency.

- TLS handshakes, CORS preflight requests, and TCP retransmissions over poor connections each add round trips, so their costs compound and multiply the base latency.

1. Connecting Users Across Multiple Continents

With a traditional approach to cloud infrastructure, you host your API servers in the regional data center closest to most of your users. But if even a few users are on a different continent, every request to that single location can add hundreds of milliseconds to their load times. Some Stream Chat customers, for example, have end users spread across the US, the EU, and Australia, which makes it difficult to pick a single infrastructure region that suits all of them.
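To see why distance matters even before any protocol overhead, here is a minimal Python sketch that computes a lower bound on round-trip time from great-circle distance. It assumes signals travel through optical fiber at roughly 200,000 km/s (about two-thirds the speed of light in a vacuum) along a perfectly straight path. The city coordinates are illustrative assumptions, and real routes are longer and add routing and queuing delays.

```python
import math

# Assumption: light in optical fiber covers roughly 200 km per millisecond.
FIBER_KM_PER_MS = 200.0
EARTH_RADIUS_KM = 6371.0


def great_circle_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Haversine distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_rtt_ms(distance_km: float) -> float:
    """Lower bound on RTT: there and back at fiber speed, ignoring routing and queuing."""
    return 2 * distance_km / FIBER_KM_PER_MS


# Illustrative coordinates (assumed): Amsterdam and Ashburn, Virginia, a common US East hub.
AMSTERDAM = (52.37, 4.90)
ASHBURN = (39.04, -77.49)

dist = great_circle_km(*AMSTERDAM, *ASHBURN)
print(f"{dist:.0f} km, minimum RTT ~{min_rtt_ms(dist):.0f} ms")
```

For Amsterdam to Ashburn this gives about 6,200 km and a floor of roughly 62ms per round trip, before a single handshake is counted.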

2. Balancing Security and Performance

Meeting and maintaining security standards comes at a cost: higher latency. All Stream Chat traffic uses TLS encryption to protect end users' security and privacy and to meet regulatory compliance requirements in several industries. This is great for security, but it adds significant latency. Here's an overview of some of the inherent latency challenges:

- TLS: the first request must open a TCP connection and complete a TLS handshake before any data is exchanged; including the request itself, this takes four full round trips (with TLS 1.2). If the base latency is 100ms, that means a total of 400ms just to get things started.

- CORS: before a browser makes a cross-origin API request (for example, one carrying an authentication header), it sends a preflight request to check that the server allows the call. This adds another full round trip to the latency of the first API call. (This is a web-only issue.)

If latency is not optimized to compensate for a higher level of security, your app users will immediately see the impact of TLS on their user experience.
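The round-trip arithmetic in the TLS and CORS bullets can be captured in a small model. This Python sketch assumes a fresh connection and counts one round trip for the TCP handshake, two for a full TLS 1.2 handshake (one with TLS 1.3), one for a CORS preflight in browsers, and one for the request itself. It ignores server processing time.

```python
def first_request_round_trips(is_browser: bool, tls_rtts: int = 2) -> int:
    """Round trips before the first API response arrives on a fresh connection.

    Assumed breakdown: 1 RTT for the TCP handshake, `tls_rtts` for TLS
    (2 for a full TLS 1.2 handshake, 1 for TLS 1.3), 1 RTT for a CORS
    preflight when running in a browser, and 1 RTT for the request itself.
    """
    tcp = 1
    preflight = 1 if is_browser else 0
    request = 1
    return tcp + tls_rtts + preflight + request


def first_request_latency_ms(rtt_ms: float, is_browser: bool, tls_rtts: int = 2) -> float:
    """Total time to first response: every round trip pays the full base latency."""
    return rtt_ms * first_request_round_trips(is_browser, tls_rtts)


print(first_request_latency_ms(100, is_browser=False))  # native mobile client: 400
print(first_request_latency_ms(100, is_browser=True))   # web app with preflight: 500
```

With a 100ms base latency this reproduces the 400ms figure for native clients, and a browser pays 500ms once the preflight is added.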

3. Mitigating Connectivity Issues

Even today, millions of end users don't have access to consistently reliable high-speed internet, whether via wired broadband or mobile networks. Connectivity can vary based on socioeconomic factors, urban/rural divides, and even minute-to-minute location changes depending on mobile network coverage.

The best apps are built to handle intermittent connectivity with minimal effect on the user experience. For in-app chat, this often means supporting offline messaging, so users can send messages and reactions and even create channels while offline. When the user comes back online, the chat SDK automatically recovers lost events and retries sending messages.
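Offline messaging of this kind is often built around an outbox queue. The following Python sketch is a hypothetical illustration, not Stream's SDK code: `OfflineOutbox` and its `send` callback are invented names, and `send` is assumed to return `True` once the server acknowledges a message. Messages queue while offline and flush in order on reconnect; a failed send stops the flush so ordering is preserved for the next retry.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, List


@dataclass
class OfflineOutbox:
    """Hypothetical client-side outbox: queues messages offline, flushes them in order when online."""

    send: Callable[[str], bool]  # assumed: returns True when the server acknowledges the message
    online: bool = False
    pending: Deque[str] = field(default_factory=deque)

    def submit(self, text: str) -> None:
        # An optimistic UI would render `text` right away; delivery happens now or on reconnect.
        self.pending.append(text)
        if self.online:
            self.flush()

    def set_online(self, online: bool) -> None:
        self.online = online
        if online:
            self.flush()

    def flush(self) -> None:
        # Send oldest first; stop at the first failure so the next retry keeps the order.
        while self.online and self.pending:
            if not self.send(self.pending[0]):
                break
            self.pending.popleft()


delivered: List[str] = []


def fake_send(text: str) -> bool:
    delivered.append(text)  # stand-in for a network call that succeeds
    return True


box = OfflineOutbox(send=fake_send)
box.submit("hi")              # offline: queued
box.submit("are you there?")  # offline: queued
box.set_online(True)          # reconnect: both delivered in order
print(delivered)
```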

But even with offline messaging and other provisions like optimistic UI updates, the internet's Transmission Control Protocol (TCP) can create a latency multiplication problem when a user connects to the chat API over poor WiFi or a spotty mobile connection. Every dropped packet must be retransmitted, and depending on the error rate, latency can quickly grow to the point where the API client times out, creating an unacceptable user experience.
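To see how a few dropped packets turn into a timeout, here is a simplified Python model of TCP retransmission. It assumes each loss is detected only when the retransmission timeout (RTO) expires and that the RTO doubles after each timeout (exponential backoff, as specified for TCP in RFC 6298). The RTTs, initial RTOs, and 3-second client timeout are hypothetical values chosen for illustration, and the model ignores fast retransmit, which can recover some losses sooner.

```python
def delivery_time_ms(rtt_ms: float, consecutive_losses: int, initial_rto_ms: float) -> float:
    """Time until a segment is acknowledged when its first `consecutive_losses` sends are dropped.

    With the RTO doubling after each timeout, the sender waits
    initial_rto * (1 + 2 + ... + 2^(k-1)) = initial_rto * (2^k - 1)
    before the successful send, which then takes one RTT to be acknowledged.
    """
    waited = initial_rto_ms * (2 ** consecutive_losses - 1)
    return waited + rtt_ms


CLIENT_TIMEOUT_MS = 3000  # hypothetical API client timeout

# Hypothetical paths: (RTT, initial RTO) in milliseconds.
paths = {"long path to origin": (300, 600), "short hop to edge": (20, 200)}
for name, (rtt, rto) in paths.items():
    for losses in range(4):
        t = delivery_time_ms(rtt, losses, rto)
        status = "times out" if t > CLIENT_TIMEOUT_MS else "ok"
        print(f"{name}: {losses} losses -> {t:.0f} ms ({status})")
```

Under these assumptions, three consecutive losses cost 4,500ms on the long path, past the client timeout, but 1,420ms when retransmissions are recovered over a short hop to a nearby server.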

The Edge Solution Explained

With the above challenges in mind, it's clear that an alternative to the traditional region-based approach is necessary to ensure consistently high performance and low latency for users around the globe. Our solution was to create a network of edge servers and combine DNS and BGP to route user traffic to the nearest edge.
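The DNS half of this routing can be sketched as a geo-DNS lookup that answers with the edge closest to the client. The Python sketch below is a hypothetical illustration: the edge names and coordinates are invented, geographic distance stands in for network proximity, and the BGP side (how packets to an address reach a nearby server) is not modeled. Production systems typically also weigh measured latency and edge health.

```python
import math
from typing import Dict, Tuple

EARTH_RADIUS_KM = 6371.0

# Hypothetical edge locations for illustration only; not Stream's actual edge list.
EDGES: Dict[str, Tuple[float, float]] = {
    "frankfurt": (50.11, 8.68),
    "virginia": (38.95, -77.45),
    "singapore": (1.35, 103.82),
    "sydney": (-33.87, 151.21),
}


def great_circle_km(a: Tuple[float, float], b: Tuple[float, float]) -> float:
    """Haversine distance between two (lat, lon) points in decimal degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def nearest_edge(client: Tuple[float, float]) -> str:
    """Geo-DNS style answer: the edge geographically closest to the client (or its resolver)."""
    return min(EDGES, key=lambda name: great_circle_km(client, EDGES[name]))


print(nearest_edge((52.37, 4.90)))     # Amsterdam -> frankfurt
print(nearest_edge((-37.81, 144.96)))  # Melbourne -> sydney
```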

In this setup, the TLS handshake, preflight requests, and TCP retransmissions happen between the client and the nearest edge, so those round trips stay short instead of traversing a longer distance across more networks.
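A rough comparison shows why terminating handshakes at the edge helps. This Python sketch applies the same round-trip counting as the TLS and CORS bullets above (1 for TCP, 2 for TLS 1.2, plus 1 for a browser preflight) and assumes the edge already holds a warm connection to the origin, so only the forwarded request crosses the long path. All RTT values are hypothetical.

```python
def direct_first_request_ms(origin_rtt_ms: float, setup_rtts: int = 3) -> float:
    """Client connects straight to the origin region: setup and request all cross the long path.

    setup_rtts: 1 (TCP) + 2 (TLS 1.2) by default; pass 4 to include a browser CORS preflight.
    """
    return origin_rtt_ms * (setup_rtts + 1)  # +1 round trip for the request itself


def edge_first_request_ms(edge_rtt_ms: float, edge_to_origin_rtt_ms: float, setup_rtts: int = 3) -> float:
    """Client completes setup with a nearby edge; the edge forwards the request over an
    already-open connection, so the long path is crossed only once."""
    client_to_edge = edge_rtt_ms * (setup_rtts + 1)
    return client_to_edge + edge_to_origin_rtt_ms


# Hypothetical RTTs: 150ms from the user to the origin region, 20ms to the nearest edge,
# and (conservatively) the same 150ms from that edge to the origin.
for label, setup in (("native app", 3), ("browser", 4)):
    print(label, direct_first_request_ms(150, setup), edge_first_request_ms(20, 150, setup))
```

Under these assumptions, the first response arrives after 230ms instead of 600ms for a native app, and after 250ms instead of 750ms in a browser.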

A common hack to achieve similar results is to encrypt traffic between clients and edges but send plain-text traffic between edges. This approach yields significant latency improvements, but it also creates substantial security problems. Our solution uses fully encrypted edge-to-edge channels and relies on persistent, multiplexed HTTP/2 connections to avoid the latency penalty. Our mobile and web SDKs support HTTP/2 out of the box and use these connections without any configuration changes.
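The benefit of persistent, multiplexed connections on an encrypted edge-to-edge link comes down to paying connection setup once. This Python sketch compares three hypothetical cases over a link with a given RTT: a new TCP + TLS 1.2 connection per request, sequential requests on one warm persistent connection, and a batch of concurrent requests multiplexed as HTTP/2 streams on one warm connection. It ignores bandwidth, congestion control, and server time.

```python
SETUP_RTTS = 3  # assumed: 1 for TCP + 2 for a full TLS 1.2 handshake


def fresh_connection_per_request_ms(n_requests: int, rtt_ms: float) -> float:
    # Every request pays the full handshake plus its own round trip.
    return n_requests * (SETUP_RTTS + 1) * rtt_ms


def warm_sequential_ms(n_requests: int, rtt_ms: float) -> float:
    # Setup already paid; each request waits for the previous response.
    return n_requests * rtt_ms


def warm_multiplexed_batch_ms(n_requests: int, rtt_ms: float) -> float:
    # HTTP/2 streams share one connection and are in flight concurrently,
    # so (ignoring bandwidth and server time) the batch needs about one round trip.
    return rtt_ms if n_requests > 0 else 0.0


n, rtt = 10, 150  # hypothetical: 10 requests over a 150ms link
print(fresh_connection_per_request_ms(n, rtt))  # 6000
print(warm_sequential_ms(n, rtt))               # 1500
print(warm_multiplexed_batch_ms(n, rtt))        # 150
```

Without multiplexing, concurrent requests would need several parallel connections, each paying its own handshake, which is the penalty a long-lived HTTP/2 connection avoids.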

Performance Improvements by the Numbers

Stream's Edge Infrastructure shows substantial performance improvements over traditional region-based infrastructure, and users located farthest from a given regional data center benefit the most. In the measurements below, best-case and median connect latencies are roughly 2x to 3x lower, and p99 latencies are roughly 3x to 12x lower. Actual round-trip times will vary with each user's network conditions, but apps with users on multiple continents should generally see impressive results.

Connect User Latency from Amsterdam to US East

| | best | median | p95 | p99 |
|---|---|---|---|---|
| Chat on Stream’s Edge Infrastructure | 165ms | 170ms | 327ms | 359ms |
| Competing Approach with Regional Proxy | 356ms | 362ms | 444.5ms | 1155ms |

Connect User Latency from Amsterdam to Mumbai

| | best | median | p95 | p99 |
|---|---|---|---|---|
| Chat on Stream’s Edge Infrastructure | 186ms | 193ms | 203ms | 219ms |
| Competing Approach with Regional Proxy | 502ms | 515ms | 526ms | 2197ms |

Connect User Latency from Amsterdam to Singapore

| | best | median | p95 | p99 |
|---|---|---|---|---|
| Chat on Stream’s Edge Infrastructure | 228ms | 232ms | 241ms | 255ms |
| Competing Approach with Regional Proxy | 660ms | 678ms | 693ms | 3112ms |
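The speedup at each percentile can be computed directly from the three tables. This short Python script uses only the numbers reported above and divides the regional-proxy latency by the edge latency.

```python
# Connect latencies in ms from the tables above: (best, median, p95, p99).
RESULTS = {
    "Amsterdam -> US East": {"edge": (165, 170, 327, 359), "regional_proxy": (356, 362, 444.5, 1155)},
    "Amsterdam -> Mumbai": {"edge": (186, 193, 203, 219), "regional_proxy": (502, 515, 526, 2197)},
    "Amsterdam -> Singapore": {"edge": (228, 232, 241, 255), "regional_proxy": (660, 678, 693, 3112)},
}
PERCENTILES = ("best", "median", "p95", "p99")


def speedups(edge, proxy):
    """Ratio of regional-proxy latency to edge latency at each percentile, rounded to 2 places."""
    return {p: round(px / e, 2) for p, e, px in zip(PERCENTILES, edge, proxy)}


for route, r in RESULTS.items():
    print(route, speedups(r["edge"], r["regional_proxy"]))
```

The smallest ratio is the US East p95 (about 1.36x) and the largest is the Singapore p99 (about 12.2x).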

GDPR Compliance with Stream Edge Infrastructure

Many organizations that use Stream must demonstrate that their software products are GDPR compliant. Given the international distribution of our edge infrastructure, these organizations may have questions about how data storage works. Data is stored in only one region to meet the requirements of GDPR and similar regulatory frameworks. We're committed to ensuring that Stream's APIs and SDKs always come with the tools you need to integrate fully compliant messaging functionality.

Boost the Trust & Reliability of Your App Today

The benefits of our edge network extend beyond real-time in-app chat to each of our products. For Auto Moderation, we guarantee message flagging within 50ms. For live video, the edge network prevents lag and stutter for millions of attendees. For activity feeds, it enables real-time scaling of activities across millions of follower relationships. Supercharge your user experience and maximize reliability with Stream's Edge Infrastructure.