Build multi-modal AI applications using our new open-source Vision AI SDK.

Open-source Python framework for voice and video AI agents. Real-time WebRTC, 25+ integrations, native tool calling and pluggable vision pipelines.

Works with Stream, Tencent RTC, local devices or any WebRTC infrastructure.

    agent = Agent(
        edge=getstream.Edge(),
        agent_user=agent_user,
        instructions="Read @golf_coach.md",
        llm=gemini.Realtime(fps=10),
        processors=[ultralytics.YOLOPoseProcessor(
            model_path="yolo11n-pose.pt",
            device="cuda"
        )],
    )

An open-source framework and developer platform for building, testing, deploying, scaling, and observing agents in production.

Build fast voice agents that reason over knowledge, search files, and respond in real time using low-latency RAG pipelines.

Power interactive real-time coaching flows with live pose tracking and frame-by-frame video processor pipelines.

Build virtual try-ons, stylized scenes, or give your agents a visual identity using models like Decart Lucy.

Train and run custom CV models with Roboflow or YOLO for security monitoring, moderation, and domain-specific workflows.

Connect external APIs so agents can validate data and take real-world actions, e.g., Twilio-based phone calls and fraud response, during live conversations.

Built for teams shipping real AI products. Every component is designed for horizontal scaling, observability, and zero-downtime deployments.

Stream audio and video frames directly to LLMs. Connects to Stream, Tencent RTC, local devices, or any standards-compliant WebRTC infrastructure.

Inject YOLO, Roboflow, or custom PyTorch/ONNX models directly into the real-time processing loop before or after the LLM call.
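As a rough illustration of what "before or after the LLM call" can mean, here is a minimal pure-Python sketch of a processing loop. Everything in it is hypothetical and is not the Vision Agents API: `Frame`, `annotate_pose`, `fake_llm`, `shout`, and `run_pipeline` are illustrative names, and the pose model and the LLM are stubs.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Frame:
    """Hypothetical stand-in for a video frame plus processor output."""
    index: int
    annotations: dict = field(default_factory=dict)


# A pre-processor takes a frame and returns a (possibly enriched) frame.
PreProcessor = Callable[[Frame], Frame]
# A post-processor takes the LLM reply and returns a (possibly modified) reply.
PostProcessor = Callable[[str], str]


def annotate_pose(frame: Frame) -> Frame:
    # Stub for a pose model; a real processor would run inference here.
    frame.annotations["pose"] = f"keypoints-for-frame-{frame.index}"
    return frame


def fake_llm(frame: Frame) -> str:
    # Stub for the LLM call; it only sees what earlier processors attached.
    return f"frame {frame.index}: saw {sorted(frame.annotations)}"


def shout(reply: str) -> str:
    # Example post-processor applied to the LLM output.
    return reply.upper()


def run_pipeline(
    frame: Frame,
    pre: List[PreProcessor],
    llm: Callable[[Frame], str],
    post: List[PostProcessor],
) -> str:
    """Run pre-processors, then the LLM, then post-processors, in order."""
    for processor in pre:
        frame = processor(frame)
    reply = llm(frame)
    for step in post:
        reply = step(reply)
    return reply


result = run_pipeline(Frame(index=0), pre=[annotate_pose], llm=fake_llm, post=[shout])
print(result)
```

The point of the design is ordering: anything a pre-processor attaches to the frame is visible to the model, while post-processors only ever see the model's reply.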

VAD, speaker diarization, and configurable smart turn-taking for natural, interruption-aware conversation flow.
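To make the turn-taking idea concrete, the sketch below uses a simple energy threshold as a stand-in for voice activity detection; production systems typically use a trained VAD model instead, and diarization is not covered here. The function names, the `threshold` value, and the `hangover` parameter are all assumptions chosen for illustration, not settings from the SDK.

```python
import math
from typing import Iterable, List, Sequence


def rms(samples: Sequence[float]) -> float:
    """Root-mean-square energy of one audio frame (0.0 for an empty frame)."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def detect_turn_ends(
    frames: Iterable[Sequence[float]],
    threshold: float = 0.1,  # assumed energy level that counts as speech
    hangover: int = 2,       # assumed number of silent frames that ends a turn
) -> List[int]:
    """Return indices of frames at which a speaker's turn is considered over."""
    turn_ends: List[int] = []
    speaking = False
    silent_run = 0
    for i, frame in enumerate(frames):
        if rms(frame) >= threshold:
            # Speech resumes or continues: reset the silence counter.
            speaking = True
            silent_run = 0
        elif speaking:
            silent_run += 1
            if silent_run >= hangover:
                turn_ends.append(i)
                speaking = False
                silent_run = 0
    return turn_ends


loud = [0.5, -0.5, 0.5, -0.5]
quiet = [0.0, 0.01, 0.0, -0.01]
frames = [quiet, loud, loud, quiet, loud, quiet, quiet, quiet]
ends = detect_turn_ends(frames)
print(ends)
```

The single quiet frame in the middle does not end the turn because speech resumes before the hangover elapses; that short grace period is what keeps brief pauses from being treated as the end of a turn.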

Execute code and APIs mid-conversation. Connect Linear, Twilio, weather APIs, or any standard MCP server.
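Tool calling generally follows one pattern: the model emits a structured request naming a function and its arguments, and the host looks that function up and runs it. The sketch below shows that dispatch step in plain Python. `ToolRegistry`, `get_weather`, and the JSON request shape are hypothetical and are not taken from the SDK or from the MCP specification.

```python
import json
from typing import Any, Callable, Dict


class ToolRegistry:
    """Hypothetical registry mapping tool names to Python callables."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}

    def register(self, func: Callable[..., Any]) -> Callable[..., Any]:
        # Used as a decorator; the function name becomes the tool name.
        self._tools[func.__name__] = func
        return func

    def call(self, request_json: str) -> str:
        """Dispatch a JSON request of the assumed form {"name": ..., "arguments": {...}}."""
        request = json.loads(request_json)
        func = self._tools.get(request["name"])
        if func is None:
            return json.dumps({"error": f"unknown tool: {request['name']}"})
        result = func(**request.get("arguments", {}))
        return json.dumps({"result": result})


tools = ToolRegistry()


@tools.register
def get_weather(city: str) -> str:
    # Stub; a real tool would call a weather API here.
    return f"Sunny in {city}"


reply = tools.call('{"name": "get_weather", "arguments": {"city": "Boulder"}}')
print(reply)
```

Returning an error payload for unknown tools, rather than raising, lets the model see the failure and recover within the same conversation.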

TurboPuffer vector search integration. Agents recall context across sessions via Stream Chat, with memory that is persistent and queryable.

Inbound and outbound telephony via Twilio with bidirectional audio streaming. SIP trunking supported.

Built-in HTTP server with Prometheus metrics endpoints and stateless agent design for horizontal pod scaling.
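For readers unfamiliar with what a Prometheus metrics endpoint returns, here is a minimal standard-library sketch that renders counters in the Prometheus text exposition format and serves them at `/metrics`. It does not reproduce the SDK's built-in server: the metric names, `render_metrics`, and `MetricsHandler` are assumptions made for illustration.

```python
from http.server import BaseHTTPRequestHandler
from typing import Dict

# Hypothetical counters; a real agent would update these as events occur.
COUNTERS: Dict[str, int] = {"agent_sessions_total": 3, "agent_tool_calls_total": 7}
HELP: Dict[str, str] = {
    "agent_sessions_total": "Sessions started by this process.",
    "agent_tool_calls_total": "Tool calls executed by this process.",
}


def render_metrics(counters: Dict[str, int], help_text: Dict[str, str]) -> str:
    """Render counters in the Prometheus text exposition format."""
    lines = []
    for name in sorted(counters):
        lines.append(f"# HELP {name} {help_text.get(name, '')}".rstrip())
        lines.append(f"# TYPE {name} counter")
        lines.append(f"{name} {counters[name]}")
    return "\n".join(lines) + "\n"


class MetricsHandler(BaseHTTPRequestHandler):
    """Serves the rendered metrics at /metrics and 404s everything else."""

    def do_GET(self) -> None:
        if self.path != "/metrics":
            self.send_error(404)
            return
        body = render_metrics(COUNTERS, HELP).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; version=0.0.4")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


print(render_metrics(COUNTERS, HELP))
```

To try it locally, pass `MetricsHandler` to `http.server.HTTPServer` on a port of your choosing and call `serve_forever()`. Because the counters here live in process memory, each replica reports its own values and Prometheus aggregates across pods at query time, which fits a stateless, horizontally scaled deployment.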

Push silent instructions or context to a running agent during a live call. Useful for human-in-the-loop orchestration.

Bring your own edge: Stream, Tencent RTC, local devices, or any WebRTC-compatible network. Swap transports with a one-line config change.

Complex multi-modal use cases solved in dozens of lines, not thousands.

Vision Agents doesn't lock you to a single transport layer. Bring your own edge network: cloud, on-prem, or local. Swap providers with a one-line config change.

Any WebRTC-compatible network works. The Edge interface is open: implement it for your own infrastructure in under 50 lines.
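The page does not list the methods the Edge interface requires, so the following is only a sketch of the general pattern: a small transport protocol plus one in-memory implementation. The `Edge` protocol shown here, its `join`, `send_audio`, and `leave` methods, and `LoopbackEdge` are all hypothetical; consult the SDK source for the real contract.

```python
from typing import List, Optional, Protocol


class Edge(Protocol):
    """Hypothetical transport contract; the real interface may differ."""

    def join(self, call_id: str) -> None: ...
    def send_audio(self, chunk: bytes) -> None: ...
    def leave(self) -> None: ...


class LoopbackEdge:
    """In-memory transport that records what was sent; handy for tests."""

    def __init__(self) -> None:
        self.call_id: Optional[str] = None
        self.sent: List[bytes] = []

    def join(self, call_id: str) -> None:
        self.call_id = call_id

    def send_audio(self, chunk: bytes) -> None:
        if self.call_id is None:
            raise RuntimeError("join a call before sending audio")
        self.sent.append(chunk)

    def leave(self) -> None:
        self.call_id = None


def run_agent(edge: Edge, call_id: str) -> None:
    # The agent logic depends only on the protocol, not on a concrete transport.
    edge.join(call_id)
    edge.send_audio(b"hello")
    edge.leave()


edge = LoopbackEdge()
run_agent(edge, "demo-call")
print(edge.sent)
```

Because `run_agent` is written against the protocol, swapping transports amounts to changing the single line that constructs the edge object.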

Cloud

Stream's globally distributed WebRTC infrastructure. Sub-500ms join, auto-scaling TURN/STUN, 333k free participant-minutes/month for developers.

edge=getstream.Edge()

Cloud

Tencent's real-time communication network with strong coverage across Asia-Pacific. Drop-in replacement using the same Agent API.

edge=tencent.Edge()

On Prem

Run entirely on local devices or private infrastructure. Ideal for air-gapped deployments, on-device inference, or custom WebRTC servers.

edge=local.Edge(host=...)

OpenAI, Gemini, xAI (Grok), OpenRouter, HuggingFace, Kimi AI, AWS Nova

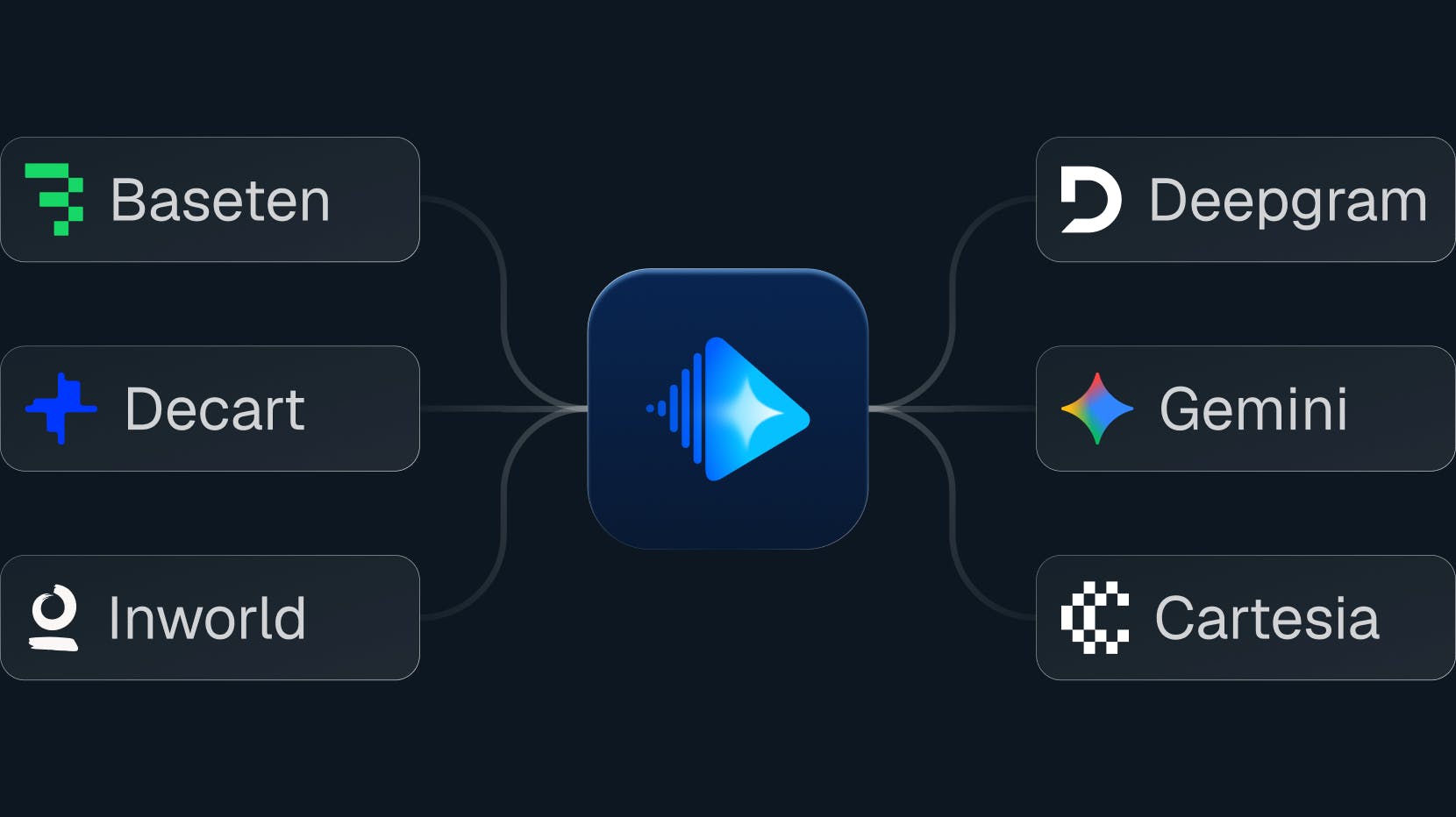

ElevenLabs, Deepgram, AWS Polly, Cartesia

YOLO (Ultralytics), Roboflow, Custom PyTorch, ONNX Runtime

Stream, Tencent RTC (Coming Soon), Local Devices, Twilio, Kubernetes, Prometheus, TurboPuffer

Install the framework and connect to Stream, Tencent RTC, local devices, or any WebRTC-compatible network. No vendor lock-in. MIT license.

Follow Stream on X, star the Vision Agents GitHub repo, and join the discussion on Discord to try demos, share feedback, and contribute.