Learn how to quickly integrate rich Generative AI experiences directly into Stream Chat.

Rapidly ship in-app messaging with feature-rich SDKs backed by a global edge network to reduce latency and improve in-app conversion, engagement, and retention.

Industry-leading enterprises use Stream to create safe and engaging in-app chat experiences, powering feature-rich, low-latency connections that foster a sense of community.

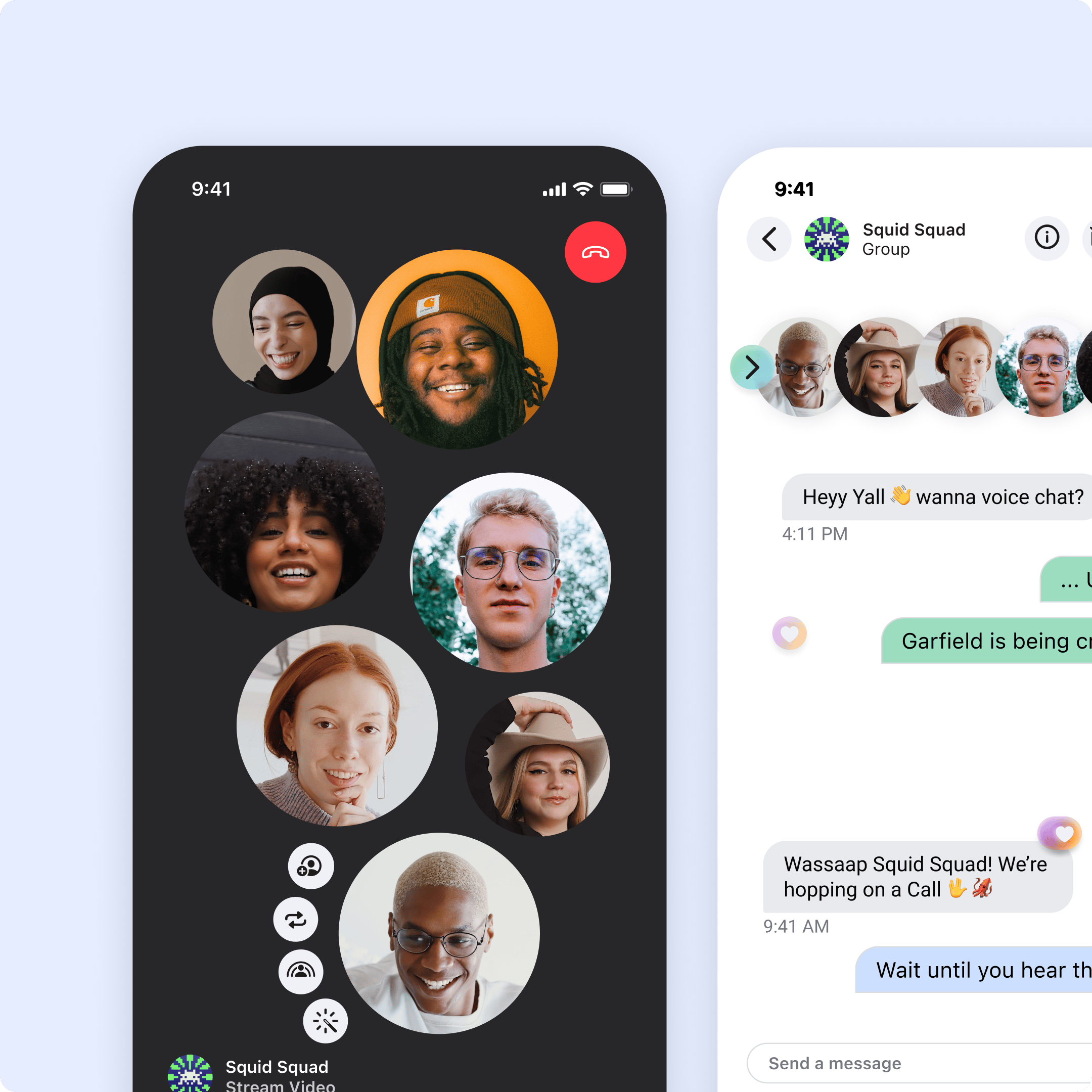

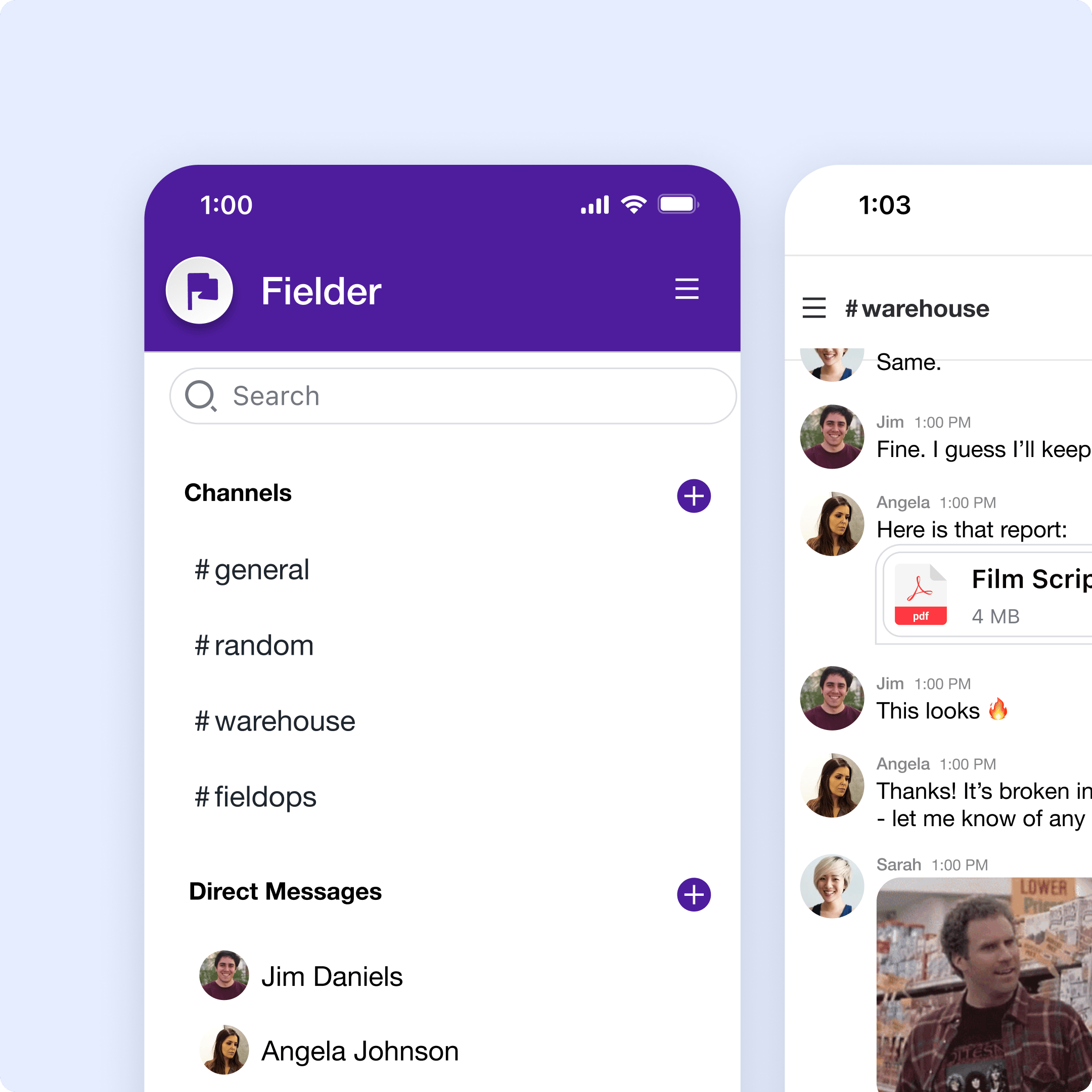

Ship in-app chat experiences with all the features your users expect.

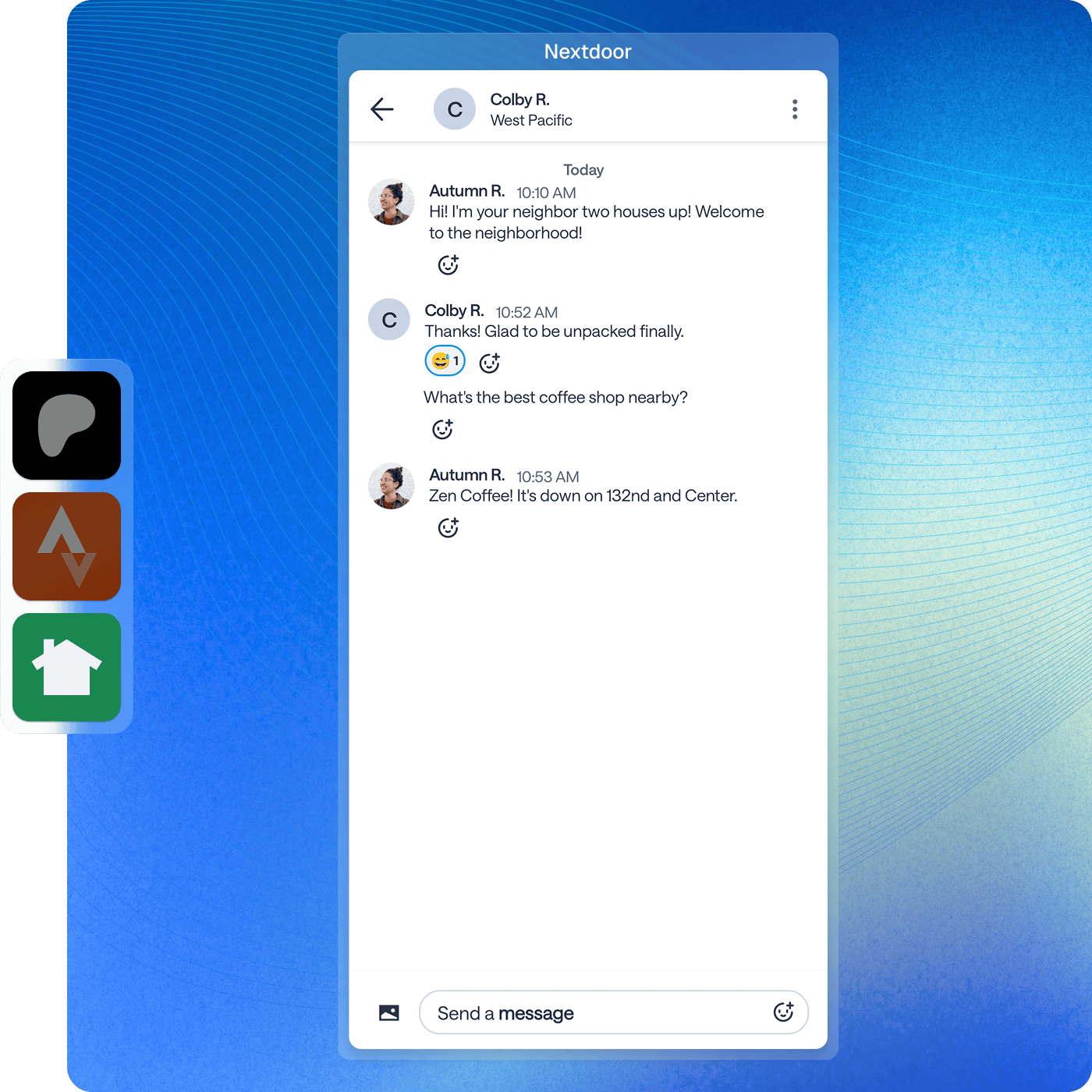

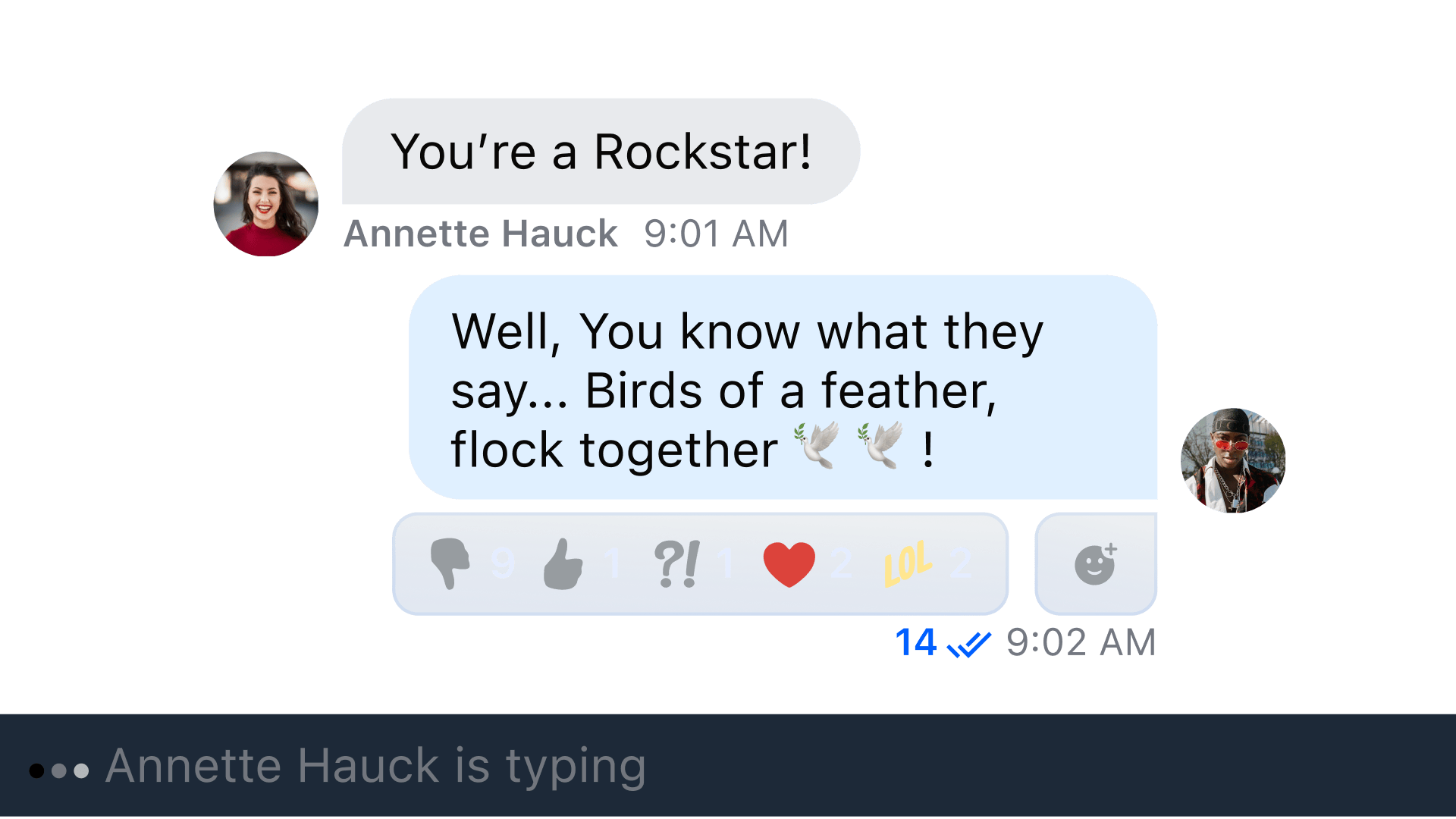

Add reactions to messages, just like in Slack, Facebook, or iMessage.

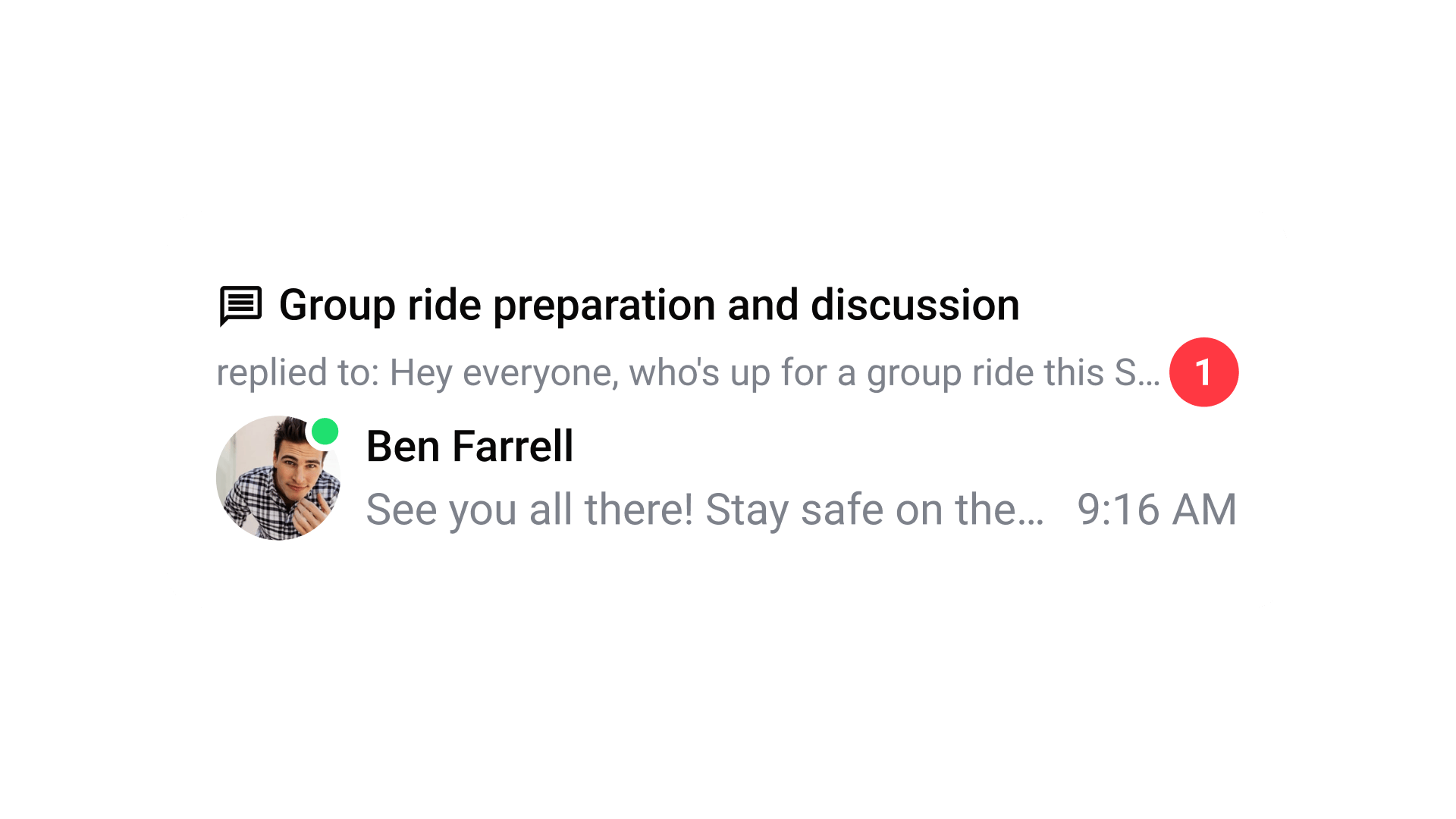

Improve the user experience by visually grouping replies under the message they respond to.

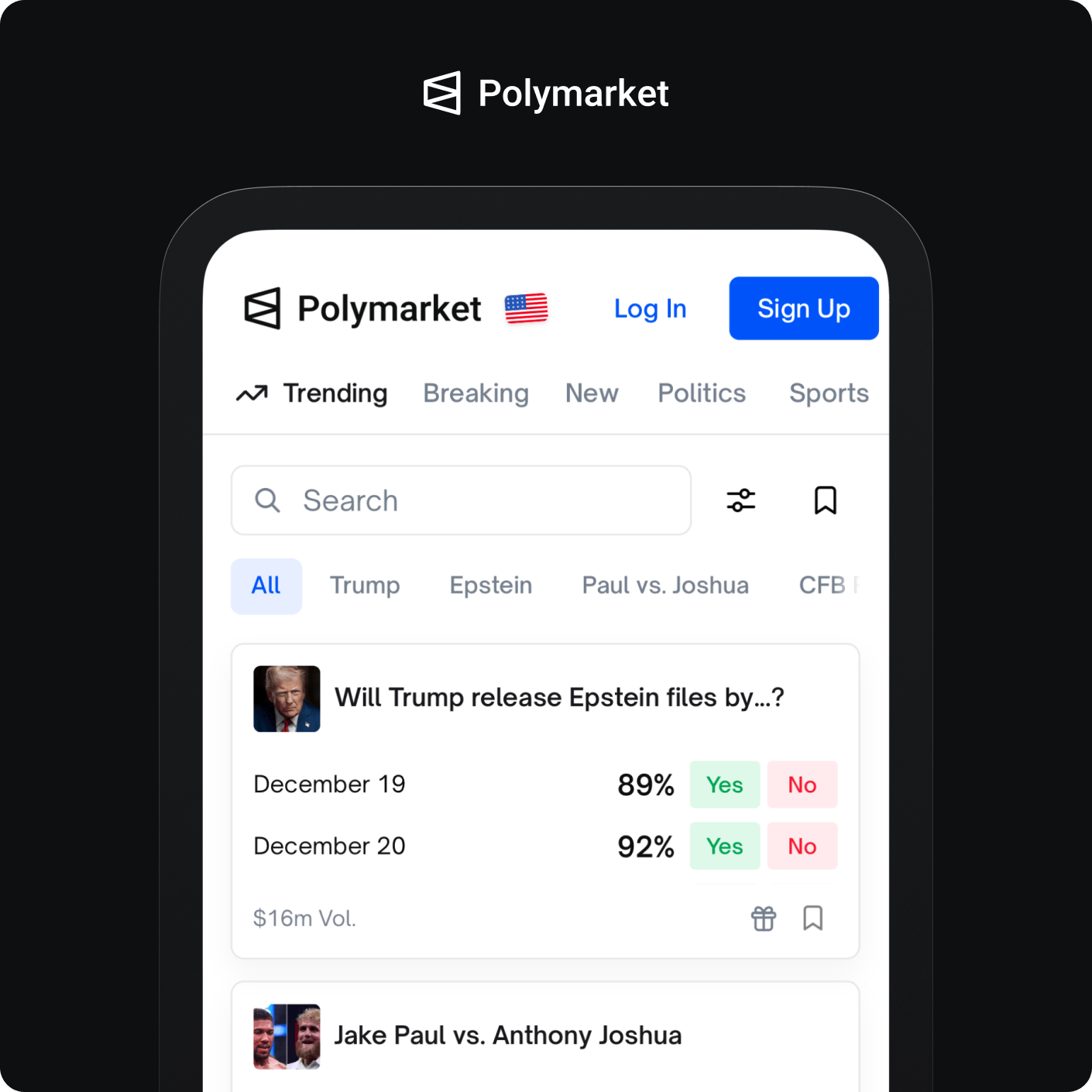

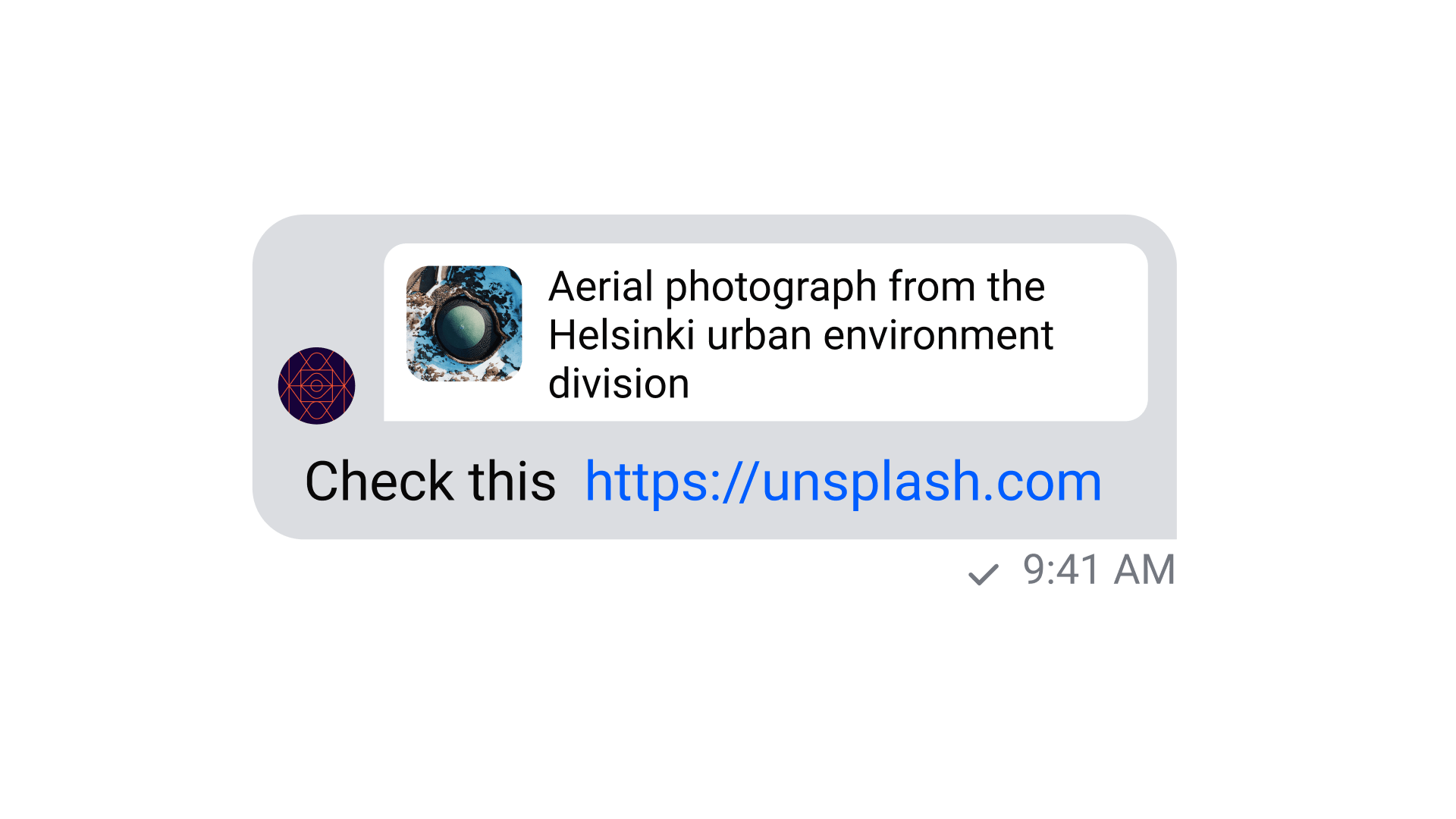

Automatically show image, text, and video previews of the URLs that users send.

Show when a user was last active and whether they are currently online. Users can also choose to appear invisible.

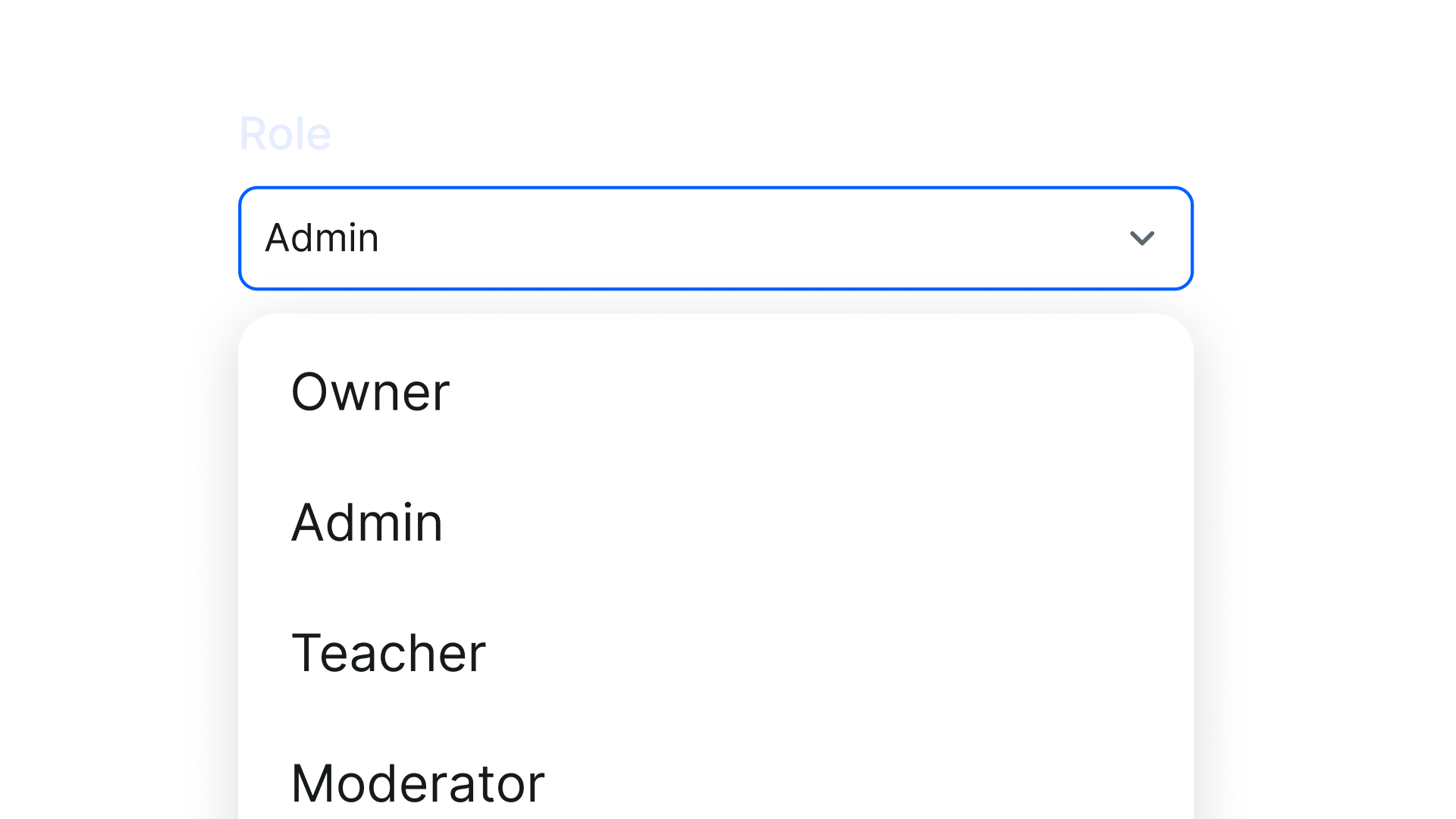

Control what regular users, administrators, moderators, and custom user roles are allowed to do.

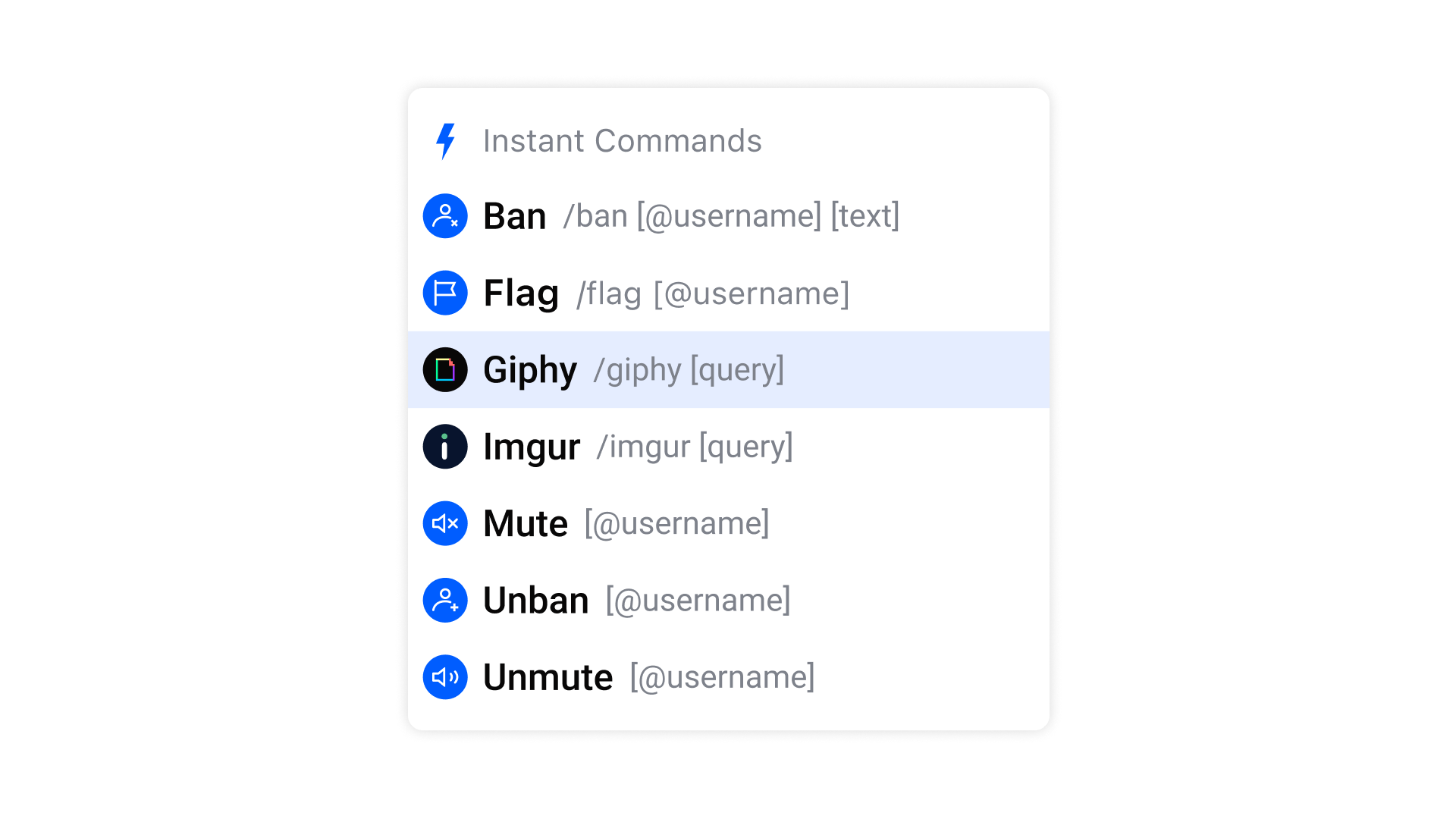

Go beyond composing messages with custom slash commands that integrate with your APIs.

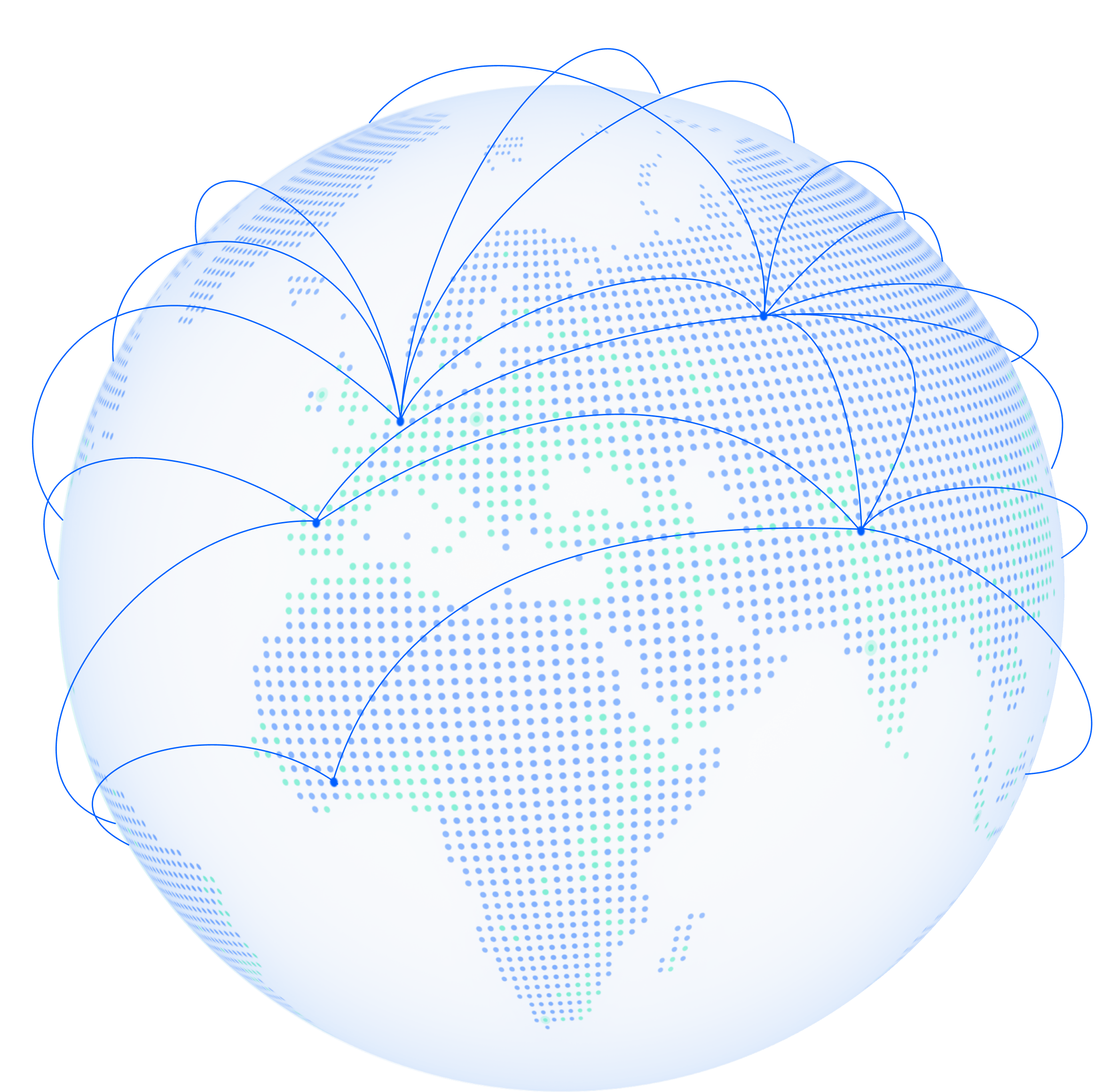

Unlike traditional cloud setups, Stream Chat’s performance doesn’t depend on users being close to a data center, resulting in up to 5 times lower latency and fewer errors on weak Wi-Fi or mobile connections.

Stream is a very reliable and scalable product. I've never had any issues, downtime, or required special maintenance. The pricing is very competitive, and the support team is very helpful and responsive.

Chat is working great for us. We have many features that we didn’t have using our old chat provider. Our audience grew by 1,000%, so we have seen a steep increase in chat usage with a stable chat solution.

Stream is working great. We had so many limitations to what we wanted to do with CodePen before we found Stream. Stream unlocked fundamental activities that make CodePen what it is today.

One problem with vendors claiming to provide an OOTB experience is that, at some point, you find the edge of that box. But with Stream, there is always an escape hatch at the edge of the box that prevents us from looking elsewhere, especially as we continue to create more experiences with custom and branded UI elements.

When we began our search for vendors, we found Stream. It had a great JavaScript SDK and documentation that would accelerate and streamline the integration process for our customers.

Integrating Stream Chat with our existing systems was smooth, thanks to its well-documented API and support from its customer success team. This ease of integration allowed us to quickly implement the chat functionality without causing disruptions in our ongoing operations.

Instead of just telling us a price and a plan, Stream is committed to understanding our business so we can architect, build, and customize our apps exactly how we want them.

When we started the migration, we started routing the data to Stream from our previous chat provider. Stream's migration process made it really easy for us to do that. The entire migration went off without a hitch. It was phenomenal.

Our development team did two MVP builds—one with Sendbird and one with Stream. Their feedback was that the Stream SDK was easier to work with; it only took three days to complete. They liked the available functionality and thought it would be the fastest route to market.

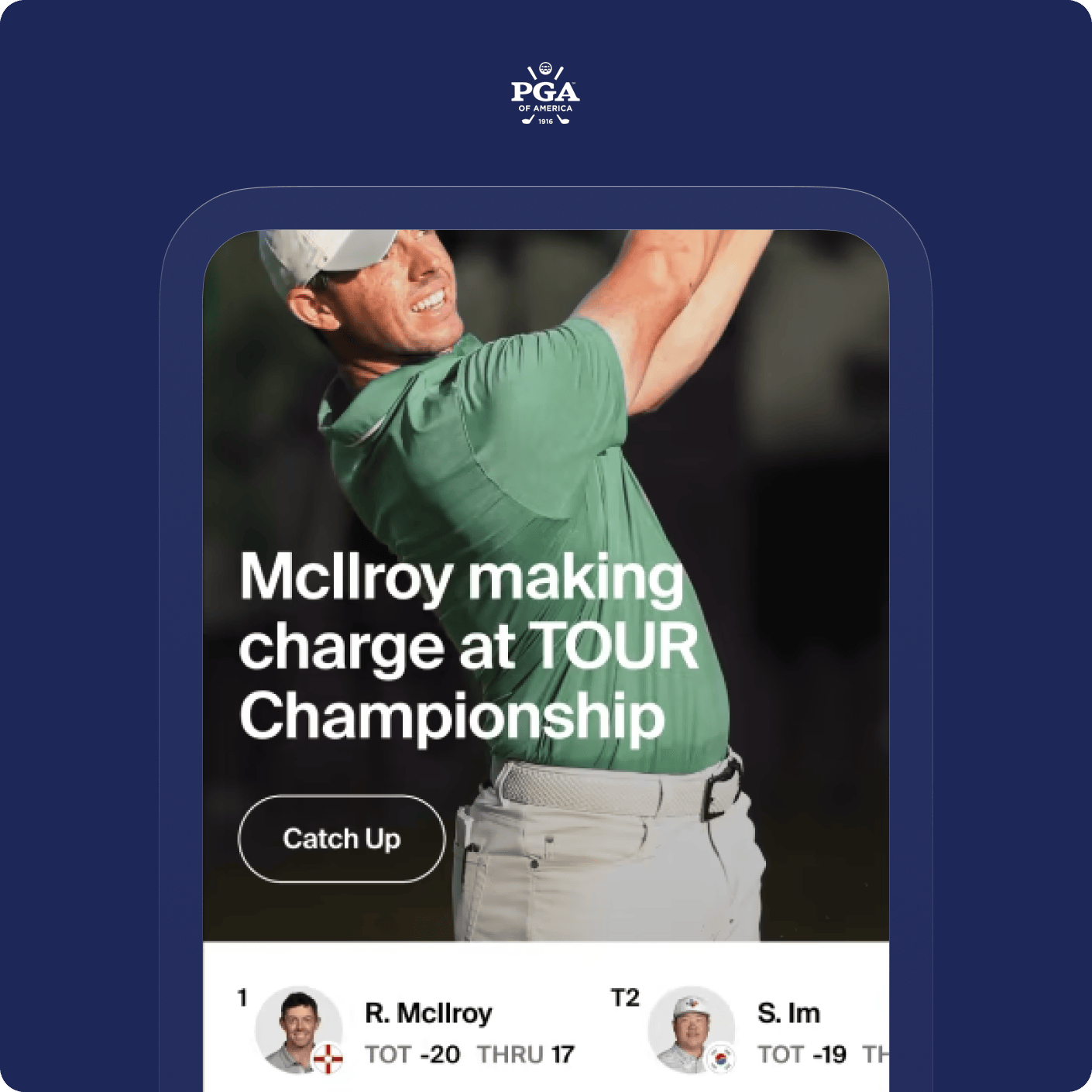

We've leveraged Stream to provide innovative audience engagement via chat, with custom reactions, verified status indicators, and user metadata to create immersive experiences. We wrapped all the features of Stream into a simple module that can be dropped onto a page to add robust chat functionality to any experience in seconds.

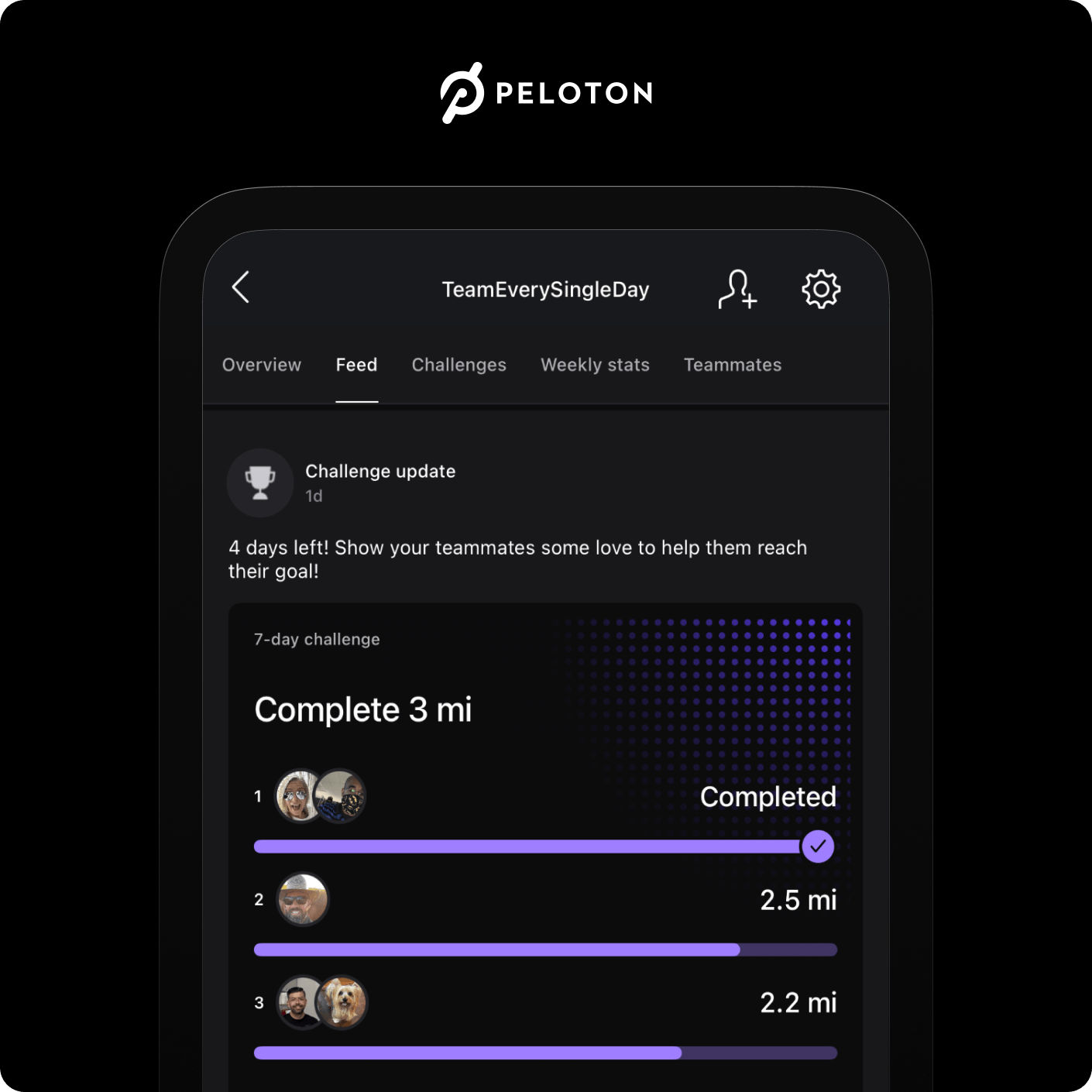

We chose Stream because of its ease of integration, impressive documentation, and robust feature catalog. It only took one developer a few hours to integrate the React Native SDK. Now, we see an average of 50% growth YoY thanks to the newfound ability for our members to communicate via Stream Chat.

Stream Chat's global edge network was a huge selling point for us. Now, we can confidently say to our customers it doesn't matter where our servers are; messages will always be delivered and feel instantaneous.

The integration with Stream Chat was remarkably quick. We rewrote the entire system, addressing not just the migration but also running both systems in parallel. The transition involved collaboration with Stream developers, ensuring a seamless process and no disruption to user experience.

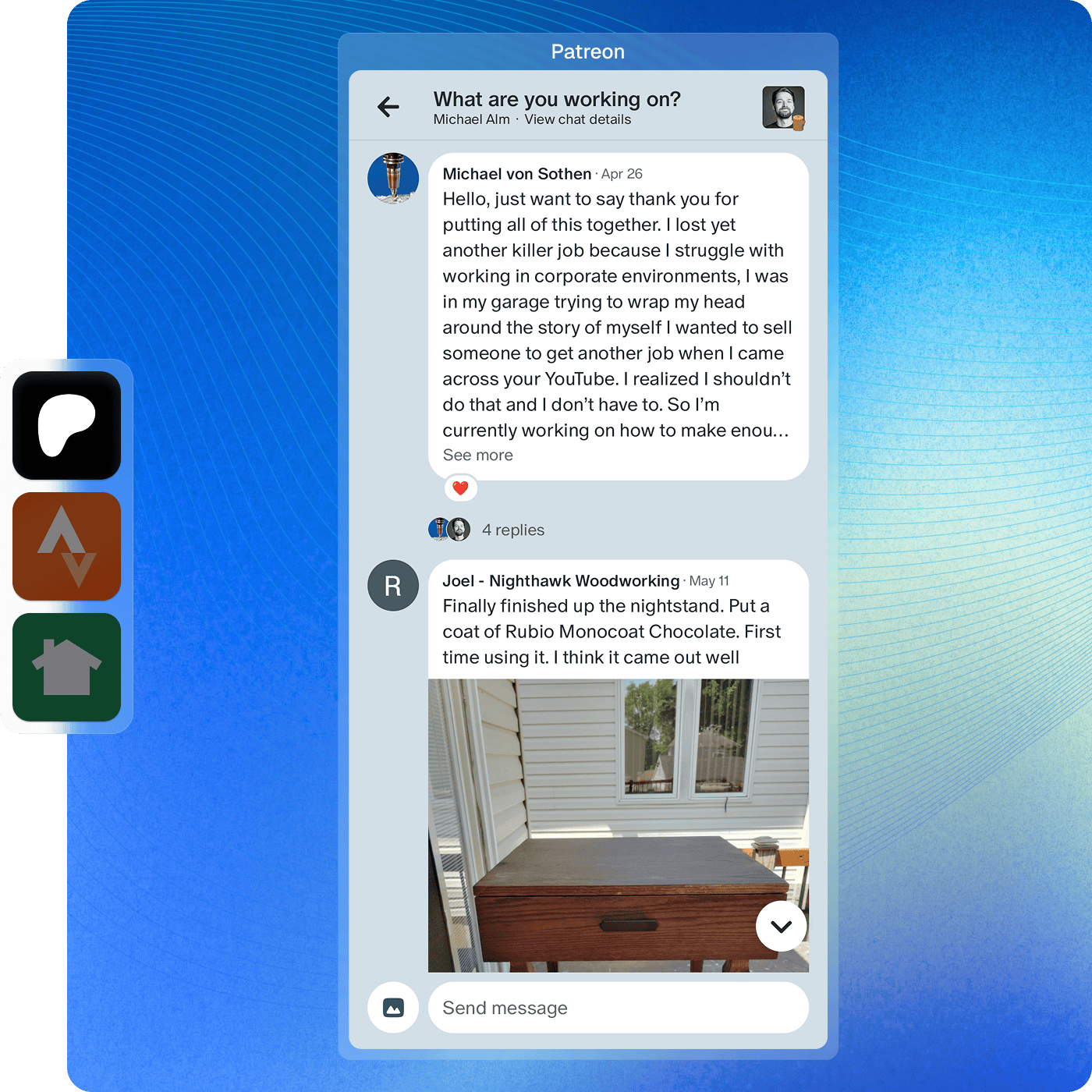

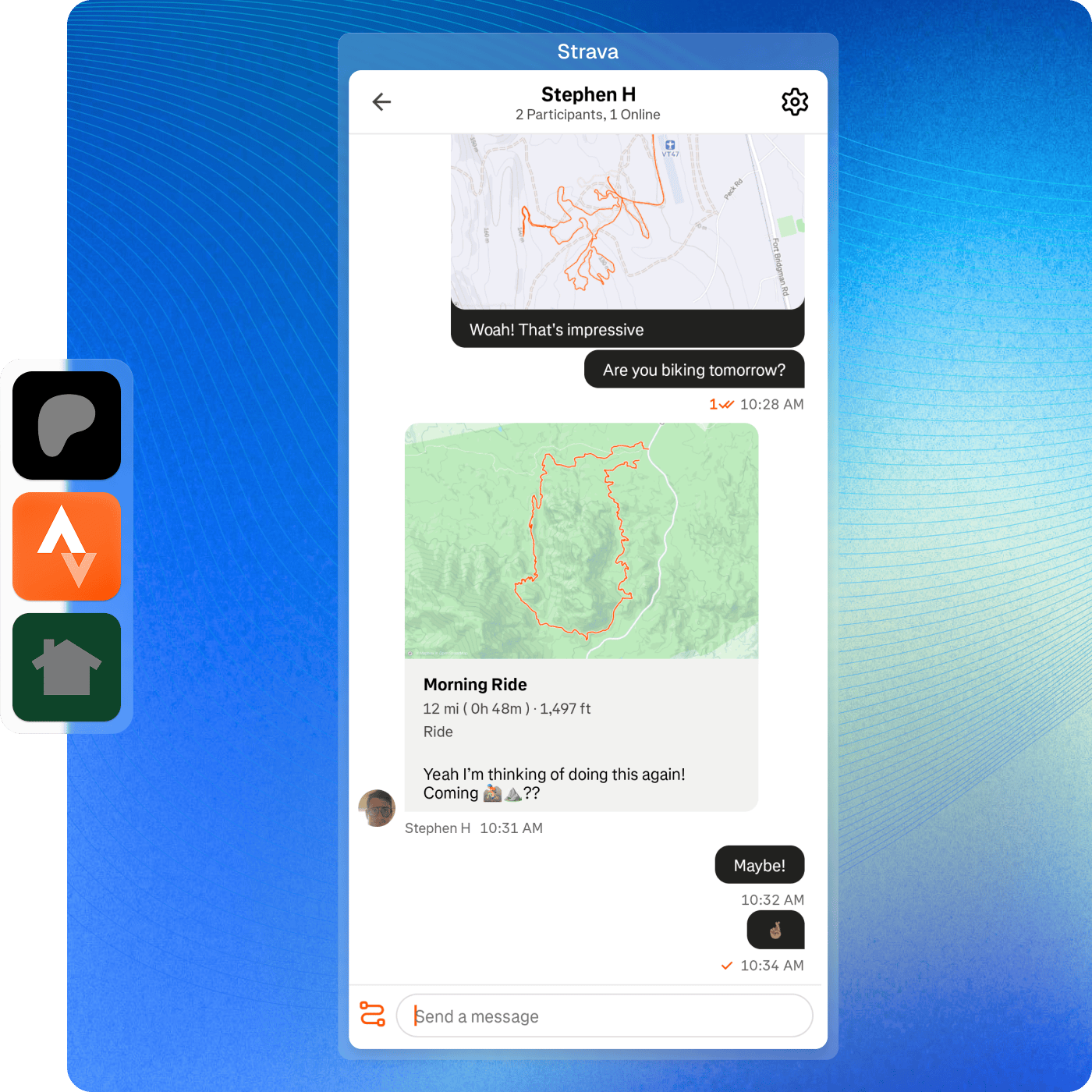

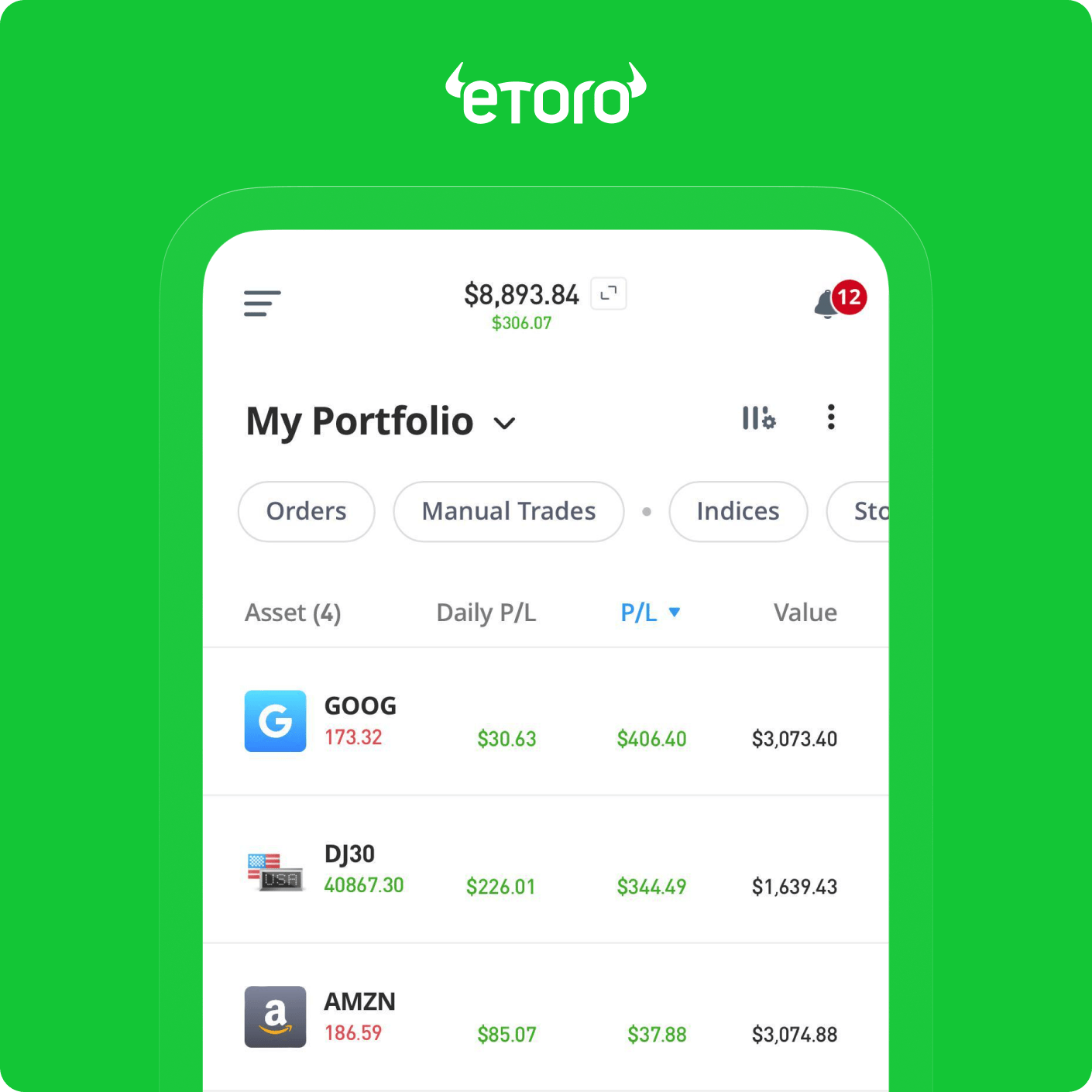

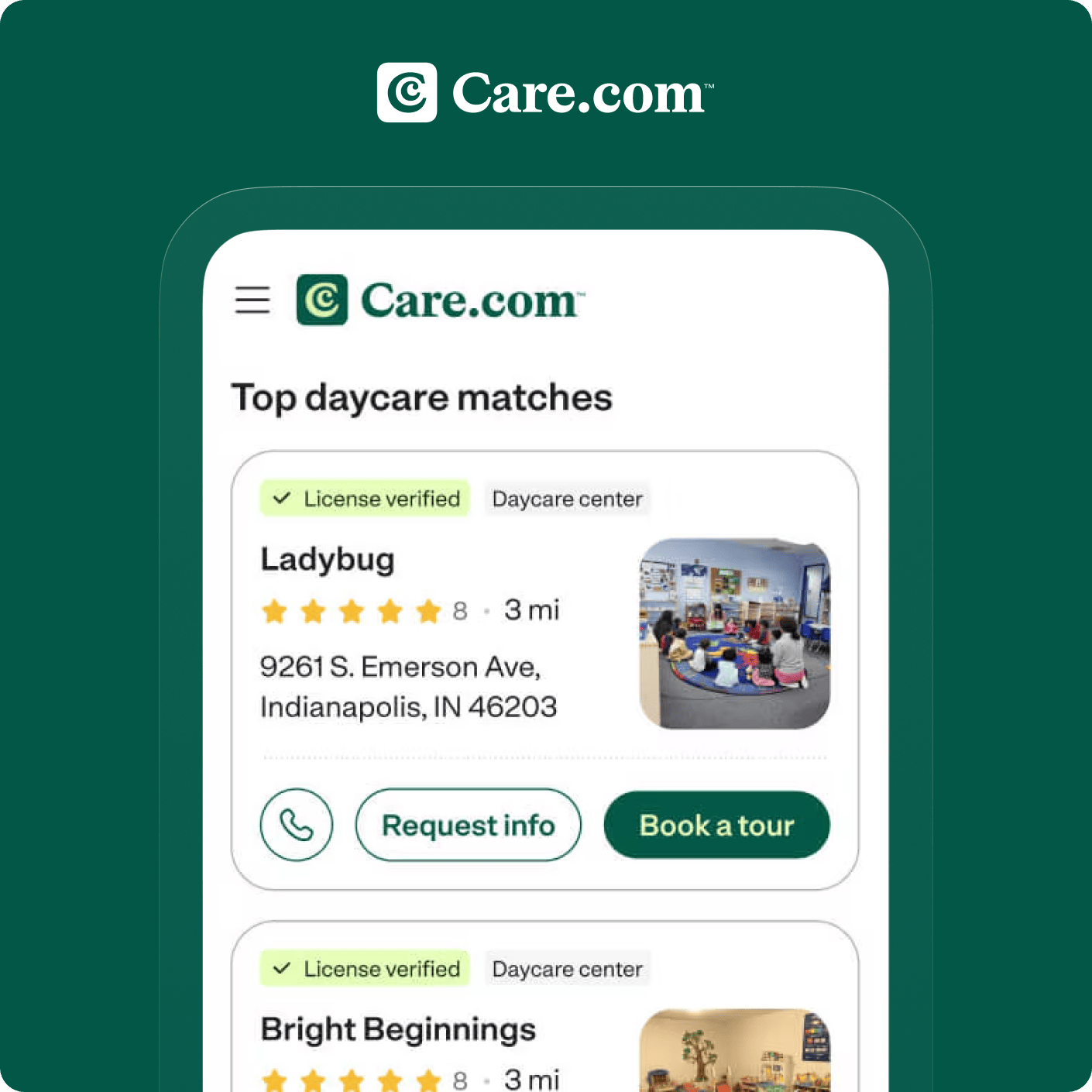

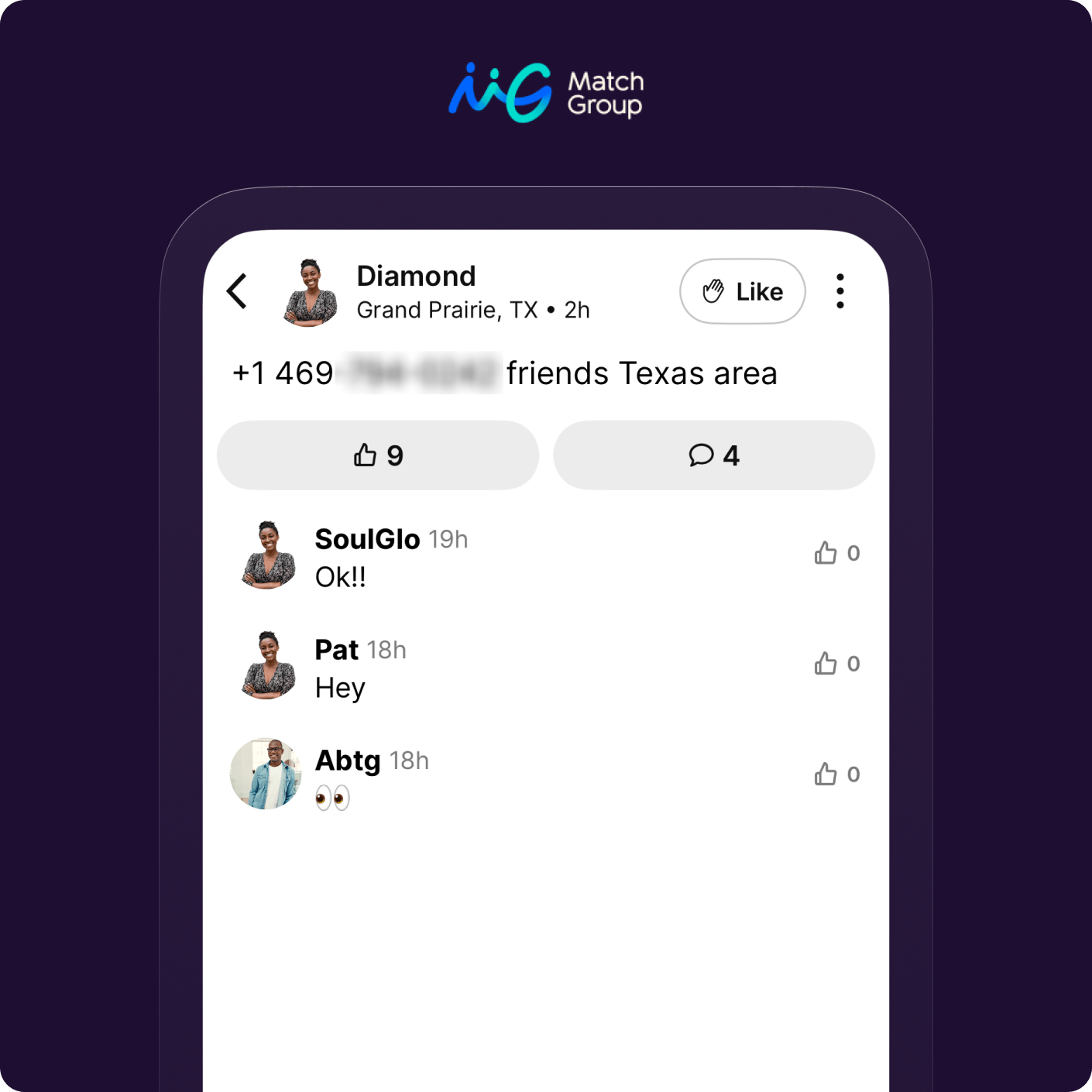

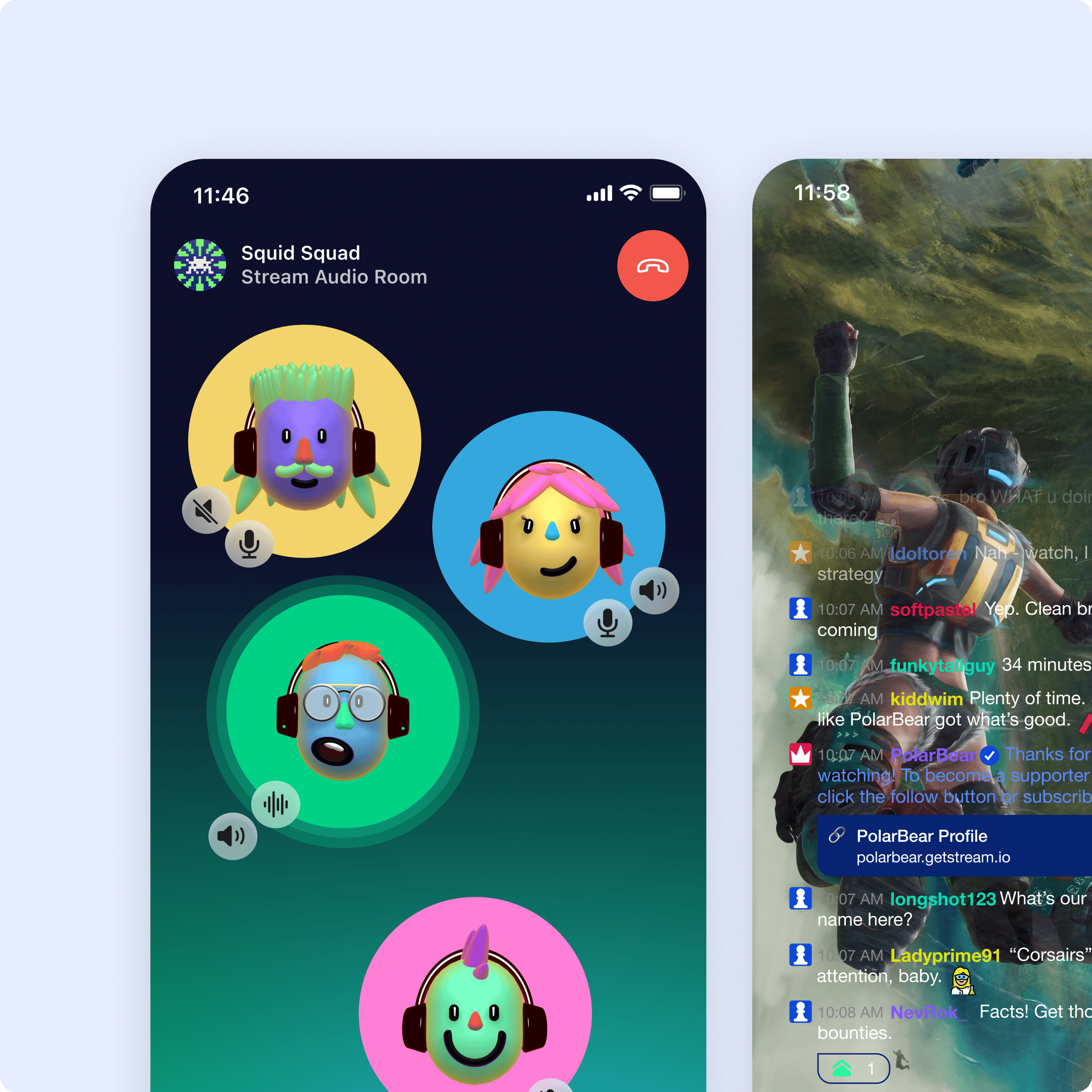

Increase real-time engagement in your web or mobile app with the Stream Chat API powering your social messaging services. Effortlessly integrate messaging and connect your users worldwide.

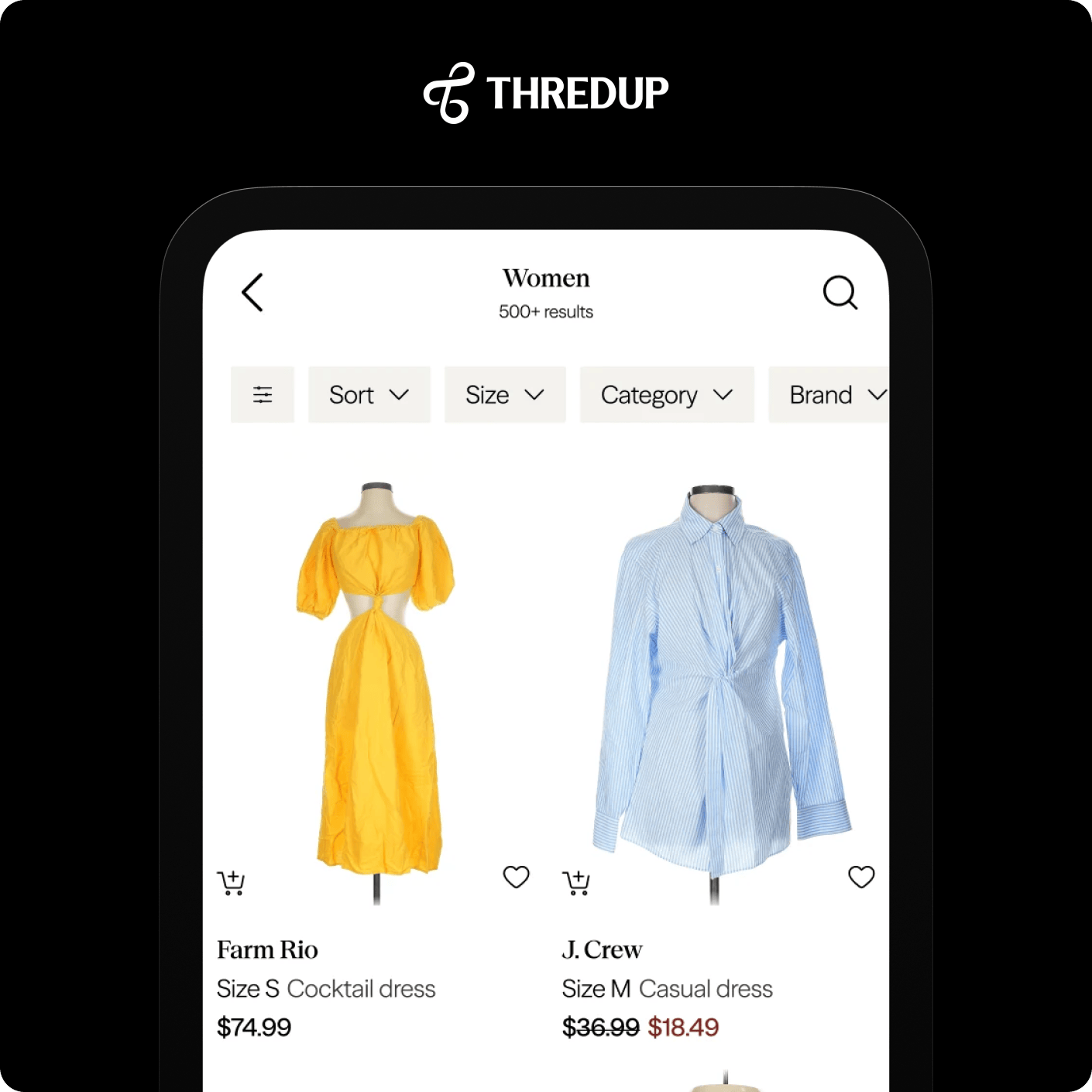

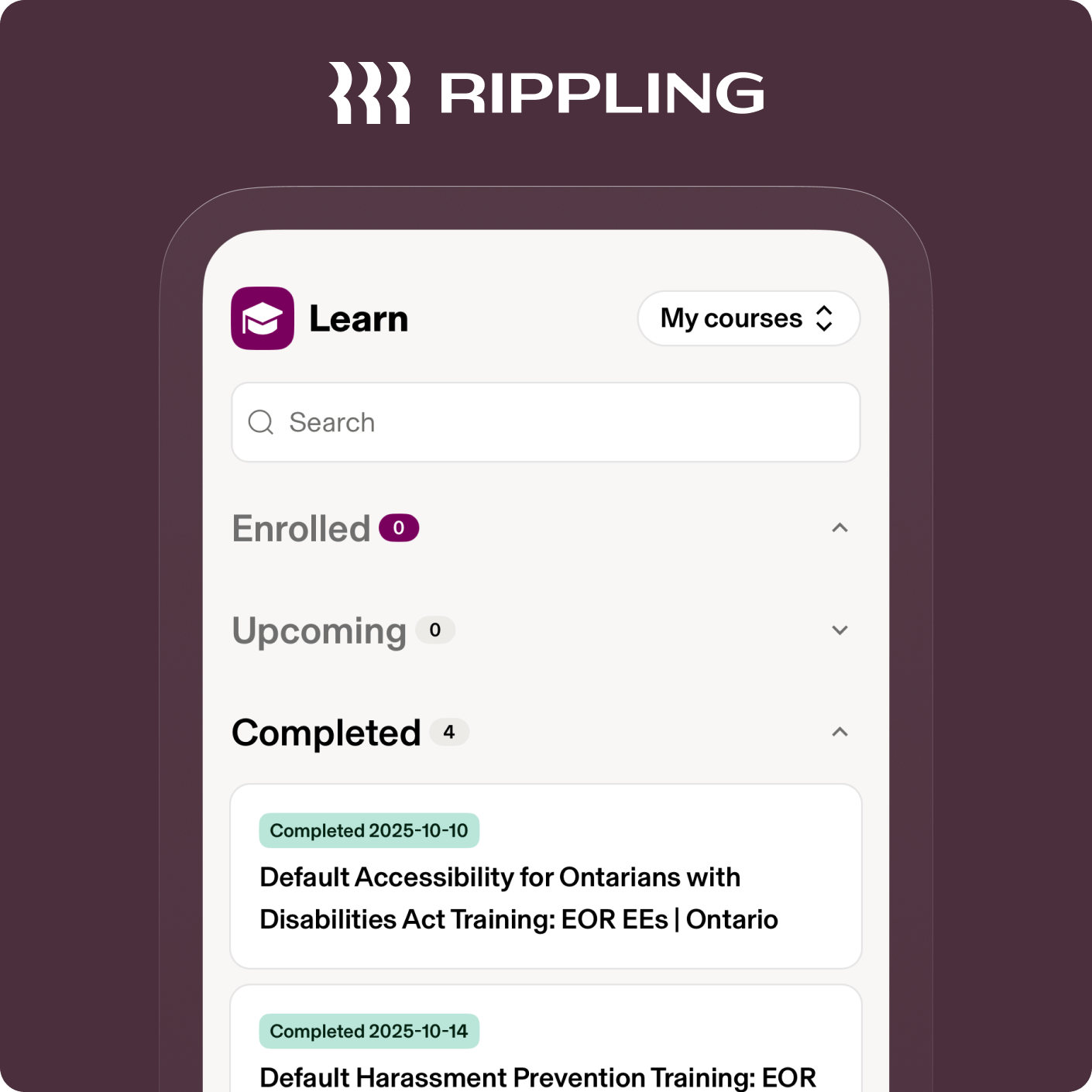

Improve community engagement or replace email for your team with Stream's in-app messaging API service. Our components and APIs allow you to build anything from a simple team chat app to a Slack alternative.

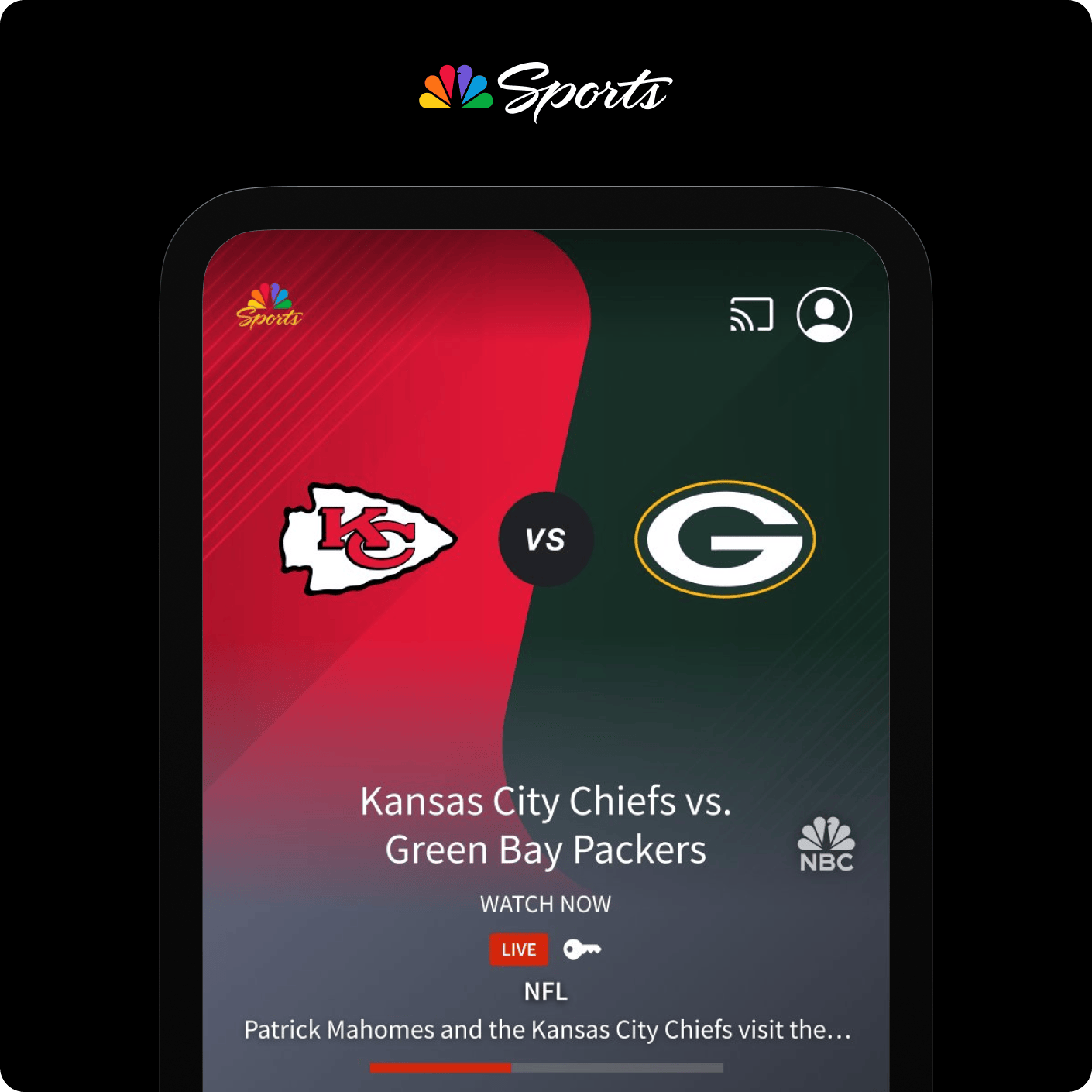

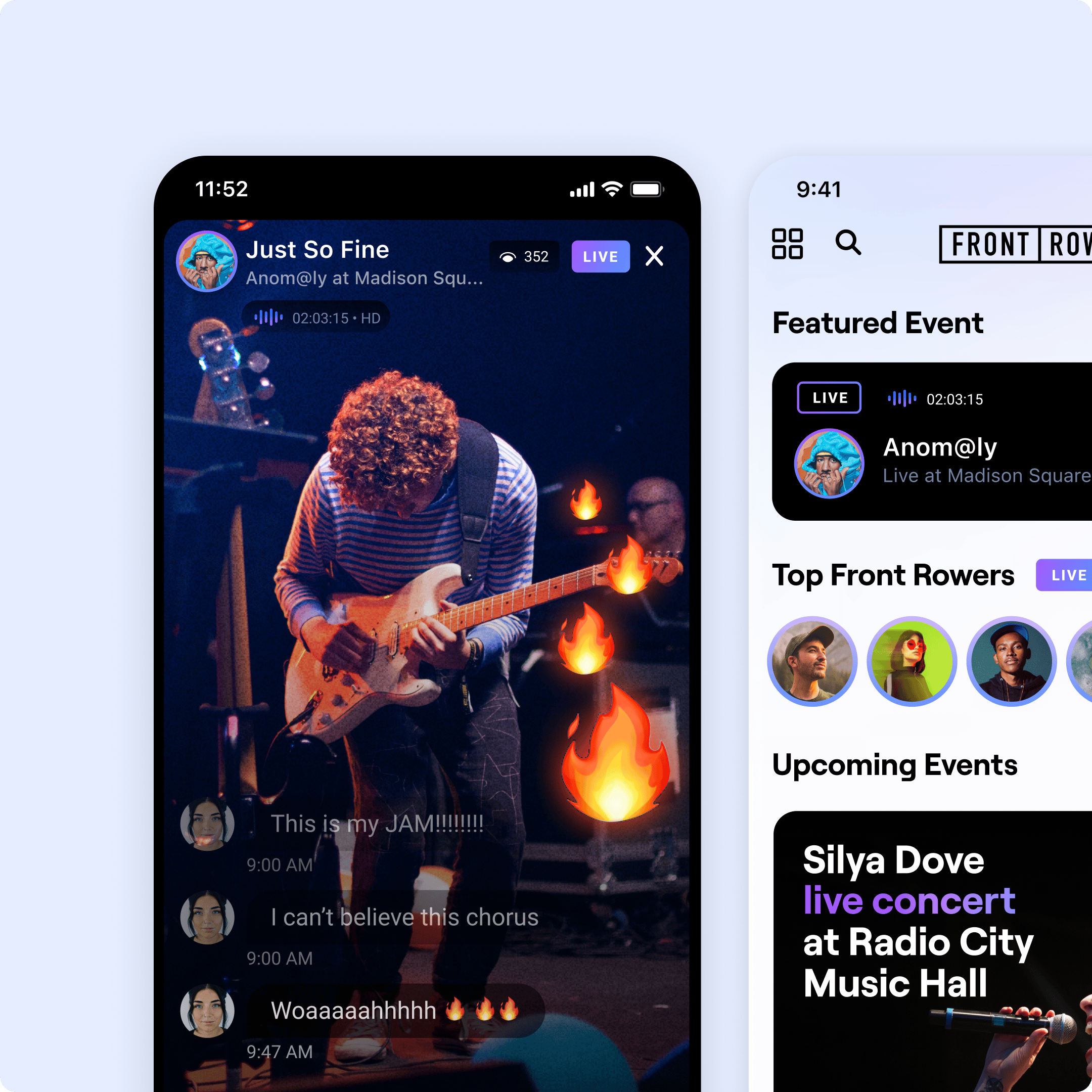

Our livestream chat allows you to capture and retain active audience engagement on your web or mobile platform by enabling your users to interact via real-time messaging.

Our platform includes direct access to our chat API and powerful, feature-rich SDKs for your language or framework. Stream is HIPAA-ready and DPF, SOC 2, and ISO 27001 certified.

Our developers are constantly iterating on our wide array of frontend and backend chat SDKs, including React, iOS (Swift), Android (Kotlin/Java), Flutter, React Native, Angular, Unity, and Unreal.

Stream Chat runs on a global edge network built for low latency and high availability. It serves millions of users with 99.999% uptime, response times averaging 5 ms in many cases, multi-region support, and enterprise-level SLAs.

Stream offers free migration support for up to 30 days, including bi-directional webhook sync, data import/export assistance, and engineering help to ensure zero downtime.

Stream Chat UI Kits are fully themeable and customizable, giving you complete control over layout, colors, behaviors, and interactions.

By combining Stream Chat, Video, Feeds, and AI Moderation, you can build a complete engagement ecosystem that supports your in-app community goals.

No credit card required.

If you're interested in a custom plan or have any questions, please contact us.