Build low-latency Vision AI applications using our new open-source Vision AI SDK.

Multimodal AI agents that see, hear, & remember.

Open-source. Edge-agnostic. Low-latency.

An open-source framework and developer platform for building, testing, deploying, scaling, and observing agents in production.

Build fast voice agents that reason over knowledge, search files, and respond in real time using low-latency RAG pipelines.

Power interactive real-time coaching flows with live pose tracking and frame-by-frame video processor pipelines.

Build virtual try-ons, stylized scenes, or give your agents a visual identity using models like Decart Lucy.

Train and run custom computer vision (CV) models with Roboflow or YOLO for security monitoring, content moderation, and domain-specific workflows.

Connect external APIs so agents can validate data and take real-world actions during live conversations, such as placing phone calls through Twilio or responding to suspected fraud.


Building models, tools, or platforms that work with real-time voice or video AI?

We’re actively adding first-party integrations, and we co-build and co-market with partners.

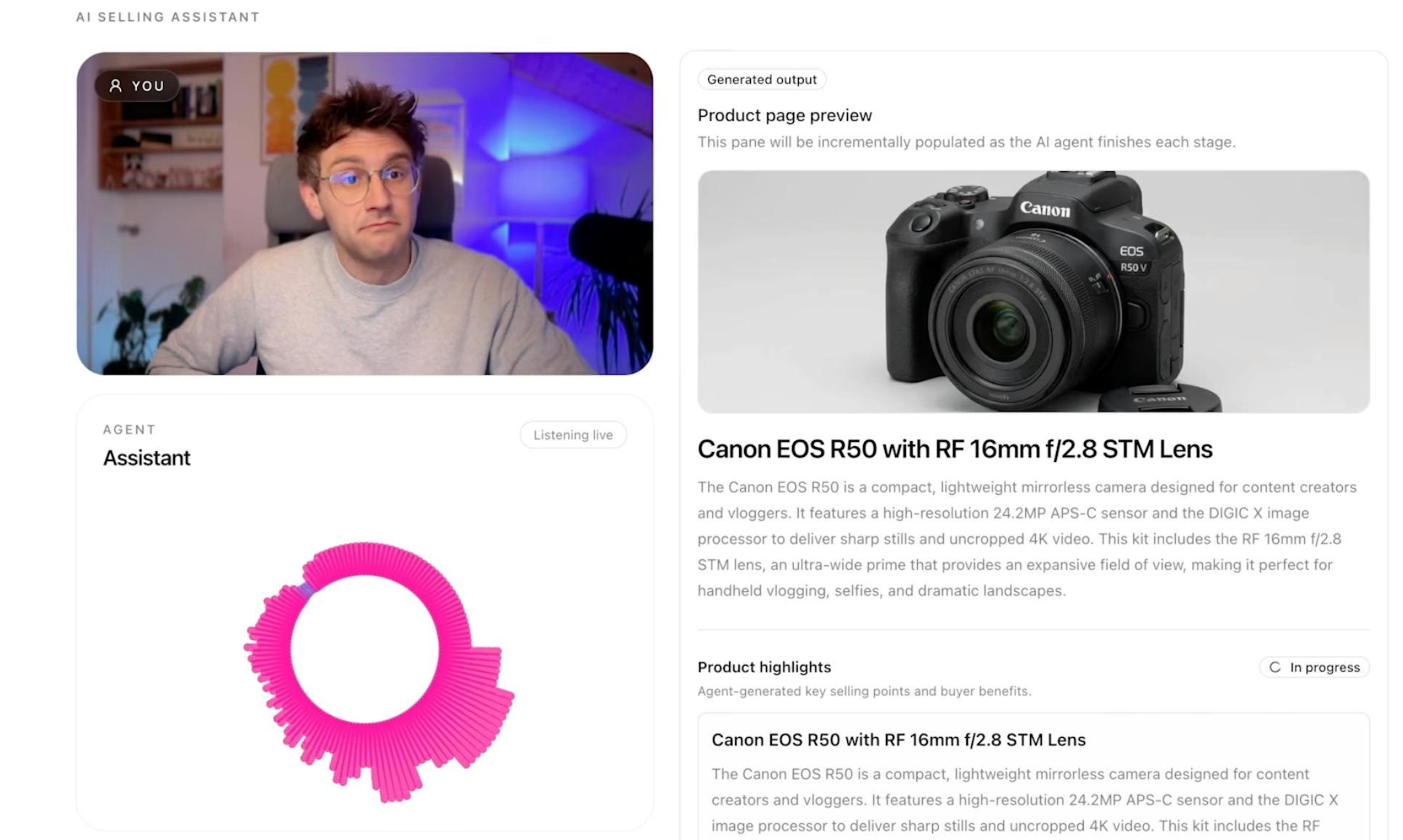

Create a product page for selling a used item that includes a product image, title, description, and a suggested price.

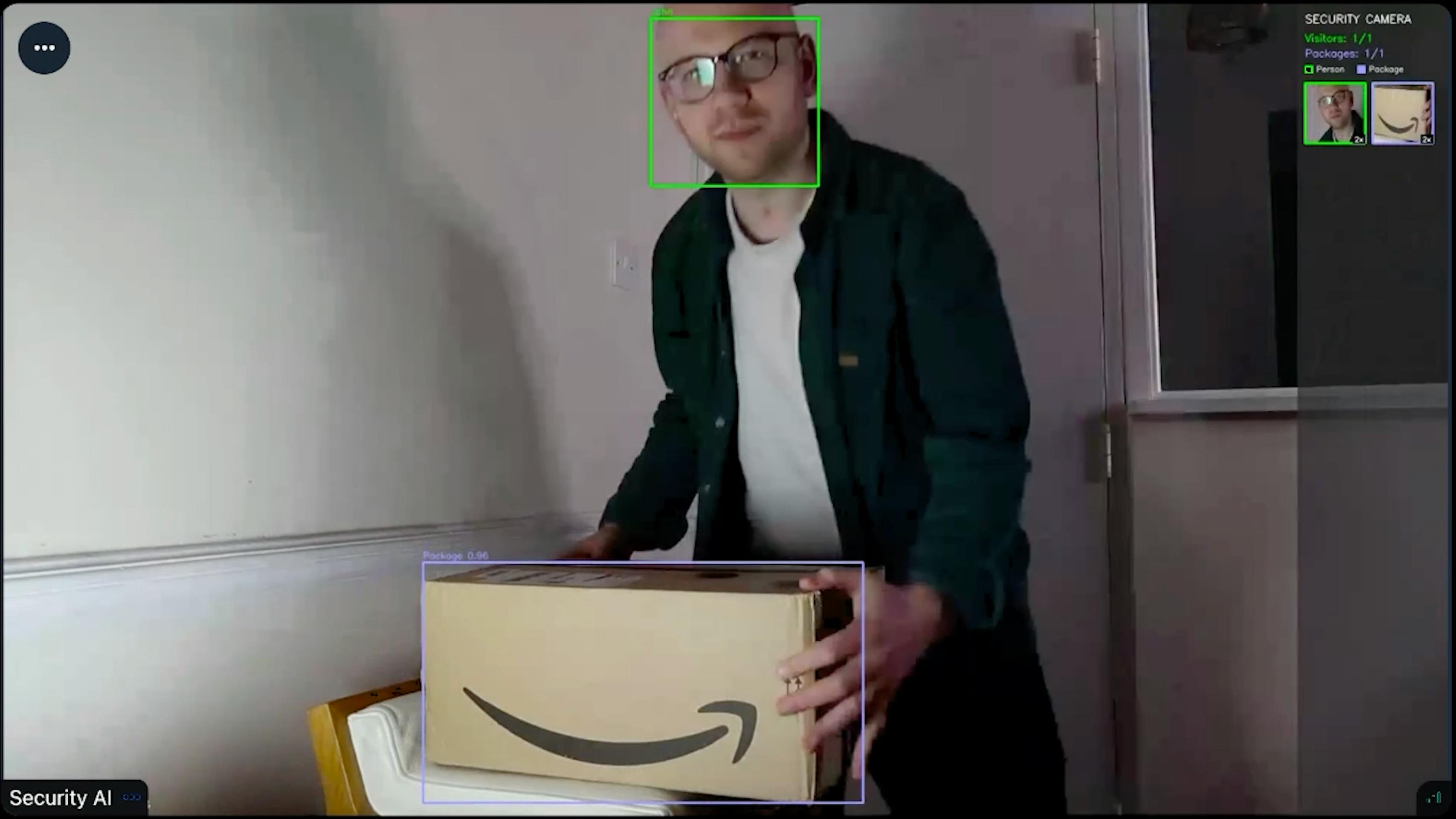

Facial recognition, package detection, automated package theft response, and posting to X.

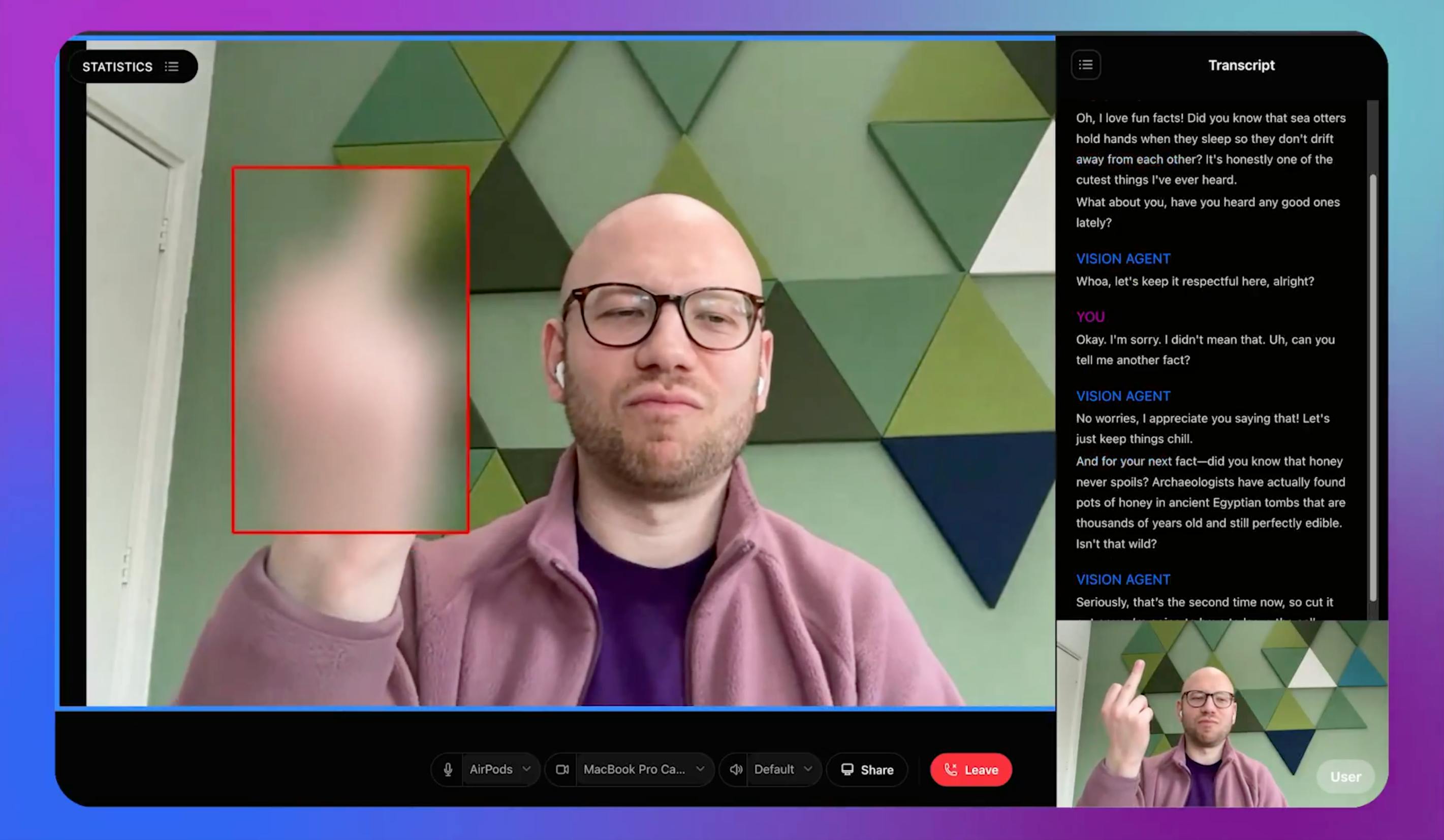

Detect and censor offensive gestures, and give three verbal warnings before kicking the user out.

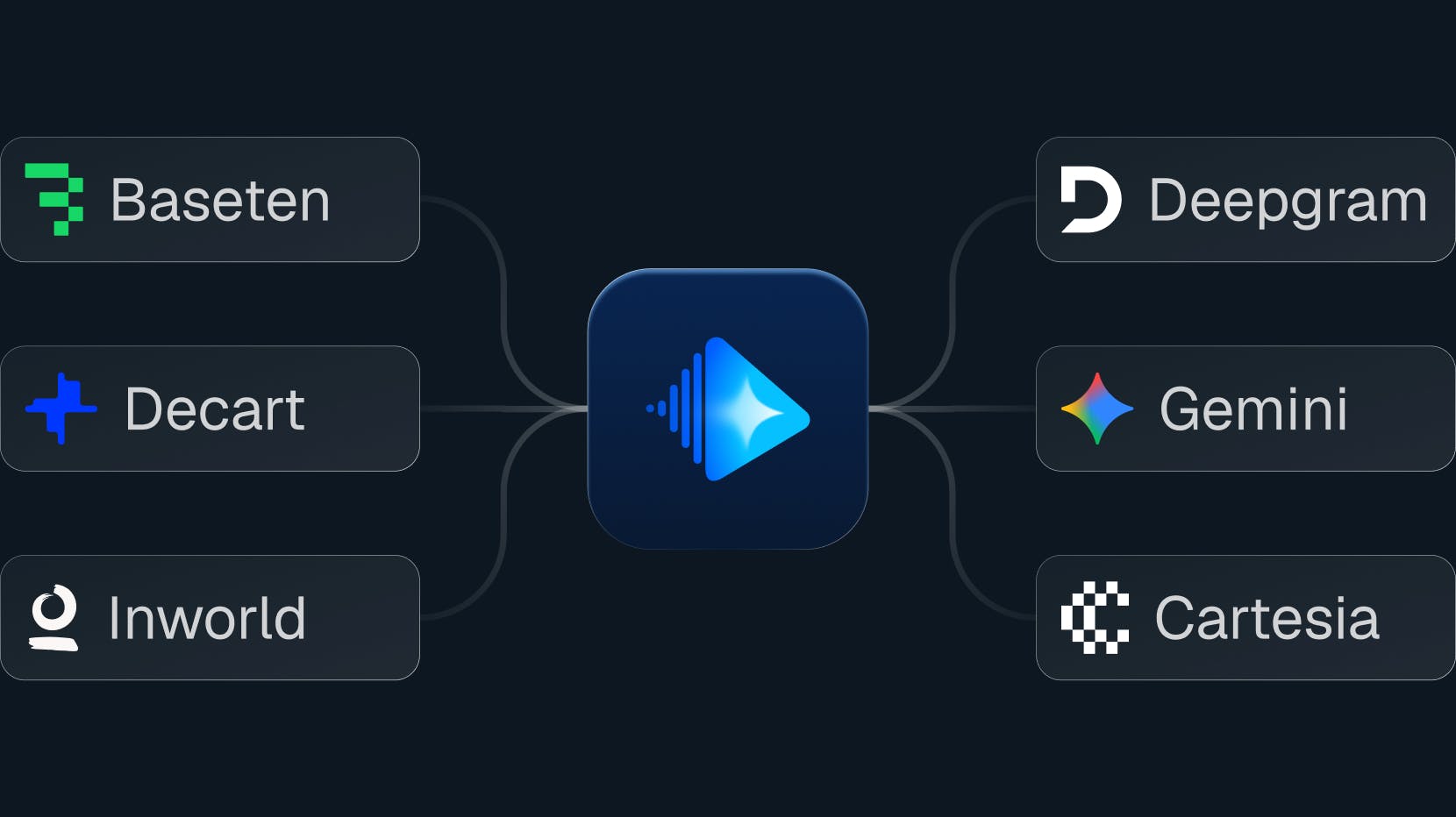

Realtime: End-to-end voice agents with multimodal support, unified under one plugin and model.

Language Models: Function calling, RAG, and full control over STT/TTS choices.

Speech-to-Text: Streaming transcription, with built-in turn detection in some providers.

Text-to-Speech: Cloud and local options, from expressive to ultra-low latency.

Vision & Video: Object detection, video analysis, and style transfer.

Avatars: Real-time lip-synced visual characters.

Turn Detection: Smart interruption handling and silence detection.

Infrastructure: Self-hosted inference, model routing, and vector search.

Community & Open Source

Follow Stream on X, star the Vision Agents GitHub repo, and join the discussion on Discord to try demos, share feedback, and contribute.